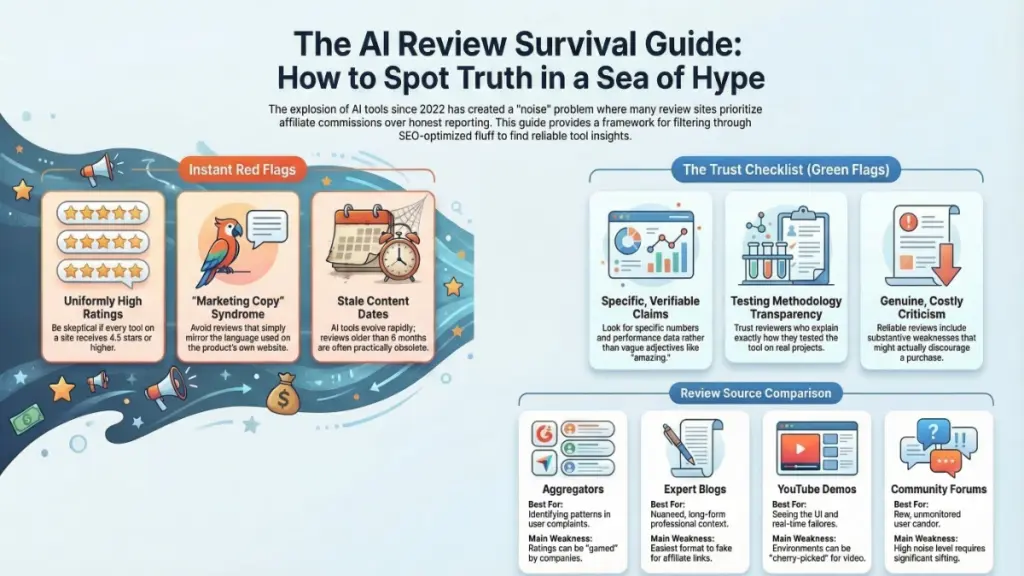

TL;DR: The explosion of AI review sites since 2022 has made tool selection harder, not easier, because many prioritize affiliate commissions over honest evaluation. To find trustworthy recommendations, seek reviews with specific metrics, substantive criticism, transparent testing methods, clear affiliate disclosures, and recent updates. Reliable sources include independent expert blogs, YouTube channels with unedited demos, user review platforms like G2 and communities like Reddit for complaint patterns, and analyst firms for enterprise decisions. Watch for red flags like uniform 5-star ratings, recycled marketing language, missing disclosures, and vague pricing discussions. A systematic 60-90 minute research process (scanning sentiment, deep-reading credible sources, analyzing user complaints, checking community forums, and conducting your own trial) helps you avoid costly subscription mistakes and choose tools that actually fit your needs.

The Hidden Problem With AI Tool Reviews

If you’re searching for honest AI tool reviews in 2025, you’ve likely run into a reality that frustrates thousands of professionals daily: most AI review sites quietly undermine your decision-making. They do it not through obvious scams or fraudulent claims, but through sophisticated, well-designed content that looks legitimate until you’ve already committed to an expensive subscription based on misleading recommendations.

The AI tools landscape has transformed dramatically since ChatGPT’s launch in late 2022. What began as a niche technology sector has exploded into a multi-billion-dollar industry encompassing thousands of solutions for content creation, image generation, code assistance, customer service automation, and business intelligence. This rapid expansion fueled equally explosive growth in AI review websites, many of which prioritize affiliate revenue over genuine user value.

As a Senior Digital Marketing Consultant who has personally tested over 150 marketing and AI productivity tools since the GPT-3 beta era, I’ve developed a critical perspective on this ecosystem. The uncomfortable truth? The proliferation of AI review sites has made informed decision-making more difficult, not easier. For every authentic, thoroughly researched review, there are numerous others crafted by content creators who spent minimal time with a free trial before optimizing their articles for maximum commission conversion.

This comprehensive guide reveals how AI review sites actually operate, identifies the specific signals that distinguish credible sources from promotional content, and provides actionable frameworks for leveraging these resources effectively—without falling victim to the common pitfalls that cost businesses thousands in wasted software investments.

Why AI Review Sites Multiplied Exponentially (And Why Volume Creates Problems)

Understanding the economic forces driving AI review content is essential for developing critical reading skills. When OpenAI released ChatGPT publicly in November 2022, it triggered an unprecedented surge in AI tool review websites. Seemingly overnight, thousands of digital properties began publishing listicles, comparison articles, and “in-depth” evaluations covering every conceivable AI product category.

The Affiliate Commission Economy

The primary business model powering most AI review sites isn’t traditional advertising or subscription revenue—it’s affiliate marketing commissions. AI software companies frequently offer between 20% and 40% recurring commissions to publishers who drive paying customers to their platforms. For premium tools priced at $99-$500 monthly, these percentages generate substantial passive income streams.
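To make the incentive concrete, here is a back-of-the-envelope calculation using only the ranges quoted above (20% to 40% recurring, $99 to $500 per month). It is a sketch that assumes the commission applies to the full monthly price for every month the referred customer stays subscribed; real programs often cap the duration or exclude refunds and discounts.

```python
def recurring_commission(monthly_price: float, rate: float, months: int = 12) -> float:
    """Commission earned from one referred customer over `months` months.

    Simplifying assumption: the rate applies to the full list price every
    month (caps, refunds, and discounts are ignored).
    """
    if not 0 <= rate <= 1:
        raise ValueError("rate must be a fraction between 0 and 1")
    return monthly_price * rate * months


# Bounds of the ranges quoted above: 20%-40% of $99-$500 per month.
low = recurring_commission(99, 0.20)
high = recurring_commission(500, 0.40)
print(f"One referral, first year: ${low:,.2f} to ${high:,.2f}")
```

At the top of that range, ten referrals to a $500 tool are worth about $24,000 a year to the publisher, which is a strong pull toward whichever tool converts best.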

This incentive structure creates an inherent conflict of interest. When reviewers are compensated based on conversion rates rather than product quality, content naturally skews toward tools with higher commission rates, better conversion funnels, and more generous affiliate terms—not necessarily superior functionality or value.

My own observation is consistent with this pattern. I’ve tracked multiple instances where tools receiving lukewarm or critical coverage suddenly garnered “best-in-class” recommendations following quiet increases to their affiliate commission structures. While correlation doesn’t prove causation, the frequency of these coincidences has made me appropriately skeptical of uniformly positive coverage.

The Content Quality Crisis

The affiliate model isn’t inherently corrupt—many ethical reviewers transparently disclose relationships while maintaining editorial independence. However, the low barrier to entry for creating AI review content has flooded the ecosystem with superficial, hastily produced articles that prioritize search engine rankings over genuine utility.

The result is information overload: hundreds of similar-sounding reviews for popular tools, often recycling the same feature lists and marketing language without substantive independent testing or critical analysis.

Critical Signals: How to Identify Trustworthy AI Review Platforms

After years of consuming, producing, and critiquing AI tool reviews across multiple formats, I’ve developed a reliable evaluation framework. These seven criteria separate valuable resources from promotional noise:

1. Specific, Quantifiable Claims Over Vague Enthusiasm

Credible reviews anchor their assessments in concrete, verifiable details. Compare these two approaches:

Low-value review: “This AI writing tool produces amazing content incredibly fast with fantastic quality.”

High-value review: “The AI generated a 500-word blog introduction in 8.3 seconds. The output required three minor edits for tone consistency and one factual correction before it was publication-ready.”

The first example uses impressive-sounding adjectives without measurable substance. The second provides specific metrics that allow readers to evaluate relevance to their own workflows. When reading AI reviews, demand numerical anchors—speed measurements, accuracy percentages, word counts, pricing comparisons, and time-to-value estimates.

2. Substantive Criticism That Risks Relationships

Every software product has limitations, trade-offs, and contextual weaknesses. The depth and honesty of critical analysis reveals reviewer integrity.

Warning sign: Cons sections containing generic, easily dismissible complaints like “the interface could be slightly more intuitive” or “advanced features require a learning curve.”

Positive indicator: Detailed discussions of specific limitations: “The tool struggles with technical subjects requiring specialized terminology, producing generic outputs for healthcare and legal content despite strong marketing copy performance” or “Enterprise pricing jumps 340% between Team and Business tiers, creating a problematic gap for growing agencies.”

Genuine criticism occasionally costs affiliate relationships—reviewers who prioritize long-term credibility over short-term commissions aren’t afraid to mention deal-breaking limitations.
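A percentage jump like the 340% in the example above is easy to misread: it means the higher tier costs 4.4 times the lower one, not 3.4 times. A small helper makes the arithmetic explicit. The $50 and $220 prices below are hypothetical, chosen only because they produce a 340% increase.

```python
def tier_jump_percent(lower_price: float, higher_price: float) -> float:
    """Percentage increase from one pricing tier to the next."""
    if lower_price <= 0:
        raise ValueError("lower_price must be positive")
    return (higher_price - lower_price) / lower_price * 100


# Hypothetical Team and Business prices (not real vendor figures).
team, business = 50.0, 220.0
jump = tier_jump_percent(team, business)
print(f"{jump:.0f}% increase; Business costs {business / team:.1f}x Team")
```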

3. Transparent Testing Methodologies

The difference between superficial and substantive reviews often lies in testing depth. Credible sources explicitly describe:

- Duration of evaluation: Was the tool tested for several hours, several days, or integrated into actual workflows for weeks?

- Use case specificity: Did testing involve realistic professional scenarios or only demo environments optimized to showcase strengths?

- Comparative frameworks: Were multiple tools evaluated against identical tasks for direct performance comparison?

- Failure documentation: Were unsuccessful outputs, errors, or limitations recorded and shared?

Reviews lacking methodology transparency should trigger skepticism. If you cannot determine how extensively a reviewer actually used a tool, assume minimal hands-on experience.
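The comparative-framework and failure-documentation points above can be sketched as a small harness: every tool gets the same task list, each run is timed, and failures are recorded instead of silently dropped. This is an illustration under stated assumptions: each tool is represented by a hypothetical Python callable that takes a prompt and returns text (real products expose different APIs, or none at all), and the two stub tools are placeholders.

```python
import time
from dataclasses import dataclass
from typing import Callable, Dict, List, Optional

# Hypothetical interface: any callable that turns a prompt into text.
ToolFn = Callable[[str], str]


@dataclass
class RunResult:
    tool: str
    task: str
    seconds: float
    words: int
    error: Optional[str] = None  # recorded failures are part of the review


def run_identical_tasks(tools: Dict[str, ToolFn], tasks: List[str]) -> List[RunResult]:
    """Run every task against every tool, timing each run and logging failures."""
    results = []
    for task in tasks:
        for name, generate in tools.items():
            start = time.perf_counter()
            try:
                output = generate(task)
                error = None
            except Exception as exc:  # document the failure rather than skip it
                output, error = "", f"{type(exc).__name__}: {exc}"
            elapsed = time.perf_counter() - start
            results.append(RunResult(name, task, elapsed, len(output.split()), error))
    return results


# Placeholder tools standing in for real products.
def tool_a(prompt: str) -> str:
    return "Draft introduction " * 50


def tool_b(prompt: str) -> str:
    raise TimeoutError("no response within limit")


for r in run_identical_tasks({"Tool A": tool_a, "Tool B": tool_b},
                             ["Write a 500-word blog introduction"]):
    status = r.error or f"{r.words} words"
    print(f"{r.tool}: {r.seconds:.3f}s, {status}")
```

Keeping the failed run in the results, rather than retrying until something works, is what lets a reader see how often a tool actually falls over.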

4. Prominent Affiliate Disclosure

Legal requirements (particularly FTC guidelines in the United States) mandate clear disclosure of material relationships that could influence recommendations. Ethical AI review sites place these disclosures prominently—typically near article introductions or recommendation summaries—not buried in footer text or separate policy pages.

A missing disclosure on a page that clearly contains affiliate links suggests the site may be cutting other ethical corners as well.

5. Aggressive Content Updating

AI tools evolve rapidly. Features launch, pricing changes, performance improves or degrades, and competitive positioning shifts quarterly. Reviews from 2023 describing tools available today may reference obsolete interfaces, discontinued capabilities, or outdated pricing structures.

Trustworthy review sites implement systematic update protocols:

- Visible publication and last-modified dates

- Update notifications describing what changed and when

- Version-specific evaluations when major updates alter product fundamentals

- Archive or deprecation notices for reviews no longer representative of current offerings

Stale content ranking well in search results represents one of the most insidious hazards in AI tool research—readers unknowingly base decisions on obsolete information.
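If a review page shows a last-modified date, checking it against a freshness threshold is trivial. The sketch below assumes you have already read that date off the page, and the 180-day default is an assumption; tighten or loosen it depending on how fast your tool category moves. Undated reviews are treated as stale because their currency cannot be verified.

```python
from datetime import date, timedelta
from typing import Optional


def is_stale(last_modified: Optional[date], today: date, max_age_days: int = 180) -> bool:
    """Flag a review as stale if it is undated or older than max_age_days."""
    if last_modified is None:
        return True  # no visible date: cannot confirm it is current
    return today - last_modified > timedelta(days=max_age_days)


today = date(2025, 6, 1)
print(is_stale(date(2023, 4, 12), today))  # True: over two years old
print(is_stale(date(2025, 3, 20), today))  # False: about ten weeks old
print(is_stale(None, today))               # True: no visible date
```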

6. Balanced Rating Distributions

Sites where every product receives 4.5-5 star ratings demonstrate either extraordinary selectivity (reviewing only exceptional tools) or rating inflation that renders their evaluation scales meaningless. Authentic review platforms show realistic distributions: some excellent tools, many adequate solutions, and genuinely poor products receiving appropriately critical coverage.

7. Coverage of Practical Implementation Factors

Beyond feature lists and performance benchmarks, credible reviews address operational realities that determine long-term success:

- Customer support responsiveness and quality

- Data privacy policies and security certifications

- Cancellation procedures and refund policies

- Integration stability and API reliability

- Onboarding complexity and training resource quality

These “unsexy” topics significantly impact user experience but rarely appear in reviews optimized primarily for affiliate conversion.

AI Review Site Categories: Strategic Applications for Different Research Needs

Understanding the distinct formats and strengths of various AI review platforms enables more effective research strategies. Each category serves specific purposes within a comprehensive evaluation process.

Aggregator and Comparison Platforms (G2, Capterra, Trustpilot, Software Advice)

Primary advantage: Volume and diversity of user perspectives. These platforms collect thousands of reviews from verified users across company sizes, industries, and use cases.

Optimal use case: Pattern identification in user complaints. While individual reviews may reflect unique circumstances or user errors, recurring themes across multiple negative reviews signal genuine product limitations. Specifically search low-rated reviews for mentions of:

- Customer support failures

- Billing disputes or unexpected charges

- Performance degradation at scale

- Feature promises unfulfilled in actual usage

- Integration breakage or data loss incidents

Critical limitations: Review gaming remains prevalent—some companies actively incentivize positive reviews through discounts, extended trials, or direct requests. Rating systems often flatten meaningful distinctions, and verified purchase requirements vary in rigor.

Independent Expert Blogs and Niche Publications

Primary advantage: Deep domain expertise and contextual evaluation within specific professional workflows.

Optimal use case: Complex tool evaluation requiring nuanced understanding of industry-specific requirements. Expert reviewers with genuine client work experience can identify subtle capabilities or limitations that generalist users miss.

Evaluation criteria for expert credibility:

- References to specific client projects or professional use cases (not hypothetical scenarios)

- Screenshots or recordings of actual tool outputs (not manufacturer-provided marketing materials)

- Acknowledgment of tools that didn’t work for specific situations

- Technical depth appropriate to the subject matter

- Consistent publication history demonstrating ongoing engagement with the category

Critical limitations: This format is easily imitated. Anyone can claim expertise and produce polished-looking reviews without substantive background. Verify claimed credentials through professional profiles, portfolio evidence, or community recognition.

YouTube Review Channels and Video Demonstrations

Primary advantage: Difficulty of faking hands-on usage. Video format reveals actual user interfaces, real-time processing speeds, unedited outputs, and workflow friction points that written reviews can obscure.

Optimal use case: Evaluating user experience, interface design, and real-time performance characteristics. Screen recordings showing complete workflows from login to output generation provide transparent evidence of tool functionality.

Quality indicators:

- Demonstrations including unsuccessful attempts or error states alongside successes

- Real-time processing without cuts that could hide delays

- Comparison of multiple outputs from identical prompts

- Discussion of pricing and value propositions

- Disclosure of sponsorship or affiliate relationships

Critical limitations: Production values can obscure substance. Highly polished videos with professional editing may prioritize entertainment over evaluation. Channels showing only impressive results likely optimize their testing environments rather than representing realistic usage.

Community Forums and Social Platforms (Reddit, Discord, LinkedIn Groups, Tool-Specific Communities)

Primary advantage: Unfiltered candor from users without commercial incentives. Community discussions feature spontaneous complaints, unprompted recommendations, and detailed troubleshooting exchanges.

Optimal use case: Discovering edge cases, recent changes, and contextual limitations not covered in formal reviews. Users posting about tools typically do so when experiencing problems or genuine enthusiasm—both signals provide valuable intelligence.

High-value sources:

- r/artificial, r/MachineLearning, r/ChatGPT for general AI tool discussions

- r/marketing, r/content_marketing, r/SEO for professional use case perspectives

- Tool-specific subreddits (r/midjourney, r/StableDiffusion, r/Notion, etc.)

- Industry Discords and Slack communities with active AI tool channels

Critical limitations: Information is unstructured, unverified, and requires significant sifting. Community sentiment can skew negative (people post more frequently about problems than satisfactory experiences) or be influenced by vocal minorities with atypical requirements.

Professional Analyst Firms (Gartner, Forrester, IDC)

Primary advantage: Rigorous methodology, vendor-neutral evaluation frameworks, and enterprise-scale research resources.

Optimal use case: High-stakes procurement decisions involving significant investment or organizational risk. Analyst reports provide comparative frameworks, total cost of ownership analyses, and strategic fit assessments unavailable elsewhere.

Critical limitations: Access requires substantial subscription fees. Coverage focuses on established vendors with enterprise traction, often missing emerging tools or specialized solutions. Evaluation cycles may lag rapidly evolving product capabilities.

Red Flags: Warning Signs That Should Trigger Immediate Skepticism

These patterns indicate review content prioritizing conversion over accuracy. Treat them as serious signals to seek alternative sources:

Uniformly High Ratings Across Diverse Tools

Sites where 90%+ of reviewed products receive 4.5-5 star ratings demonstrate either impossible selectivity or evaluation criteria so lenient as to be meaningless. Realistic assessment produces varied outcomes—some excellent tools, many adequate solutions, and genuinely poor products receiving critical coverage.
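Checking a site for this pattern only takes a list of the star ratings it has published. The sketch below computes the share of ratings at 4.5 or above and flags the site when that share reaches the 90% level described above; the sample ratings for the two sites are made up for illustration.

```python
from typing import List


def high_rating_share(ratings: List[float], cutoff: float = 4.5) -> float:
    """Fraction of ratings at or above `cutoff` on a 5-star scale."""
    if not ratings:
        raise ValueError("need at least one rating")
    return sum(r >= cutoff for r in ratings) / len(ratings)


def looks_inflated(ratings: List[float], threshold: float = 0.9) -> bool:
    """True when the share of 4.5+ ratings reaches the 90% red-flag level."""
    return high_rating_share(ratings) >= threshold


# Hypothetical ratings collected from two review sites.
site_a = [4.8, 4.9, 4.7, 5.0, 4.6, 4.8, 4.9, 4.5, 4.7, 5.0]
site_b = [4.8, 3.2, 4.1, 2.5, 4.6, 3.9, 4.4, 3.0, 4.9, 3.6]
print(looks_inflated(site_a), looks_inflated(site_b))  # True False
```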

Systematically Skewed Pros-to-Cons Ratios

While exceptional tools occasionally deserve predominantly positive coverage, sites where every review pairs an extensive pros list with a brief, generic cons section are likely adding criticism to fill a template rather than reporting genuine evaluation. “Cons” written to be easily dismissed by readers (“minor learning curve,” “premium pricing reflects premium quality”) protect affiliate relationships rather than inform decisions.
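Two rough heuristics capture this pattern: how much longer the pros section is than the cons section, and whether every con comes from a stock list of easily dismissed complaints. Both the 5:1 ratio threshold and the phrase list below are assumptions for illustration (the phrases echo examples quoted in this guide), not established standards, and the sample review is hypothetical.

```python
from typing import List

# Stock complaints that cost the vendor nothing; extend as you find more.
GENERIC_CONS = [
    "learning curve",
    "premium pricing reflects premium quality",
    "interface could be slightly more intuitive",
]


def word_count(items: List[str]) -> int:
    return sum(len(item.split()) for item in items)


def cons_look_perfunctory(pros: List[str], cons: List[str], max_ratio: float = 5.0) -> bool:
    """Flag a review whose cons are tiny relative to the pros, or entirely generic."""
    cons_words = word_count(cons)
    if cons_words == 0:
        return True  # no cons at all
    ratio = word_count(pros) / cons_words
    all_generic = all(any(p in con.lower() for p in GENERIC_CONS) for con in cons)
    return ratio > max_ratio or all_generic


pros = [
    "Fast generation of long-form drafts with consistent tone across sections",
    "Integrates with common CMS platforms without extra plugins",
    "Template library covers most marketing formats",
]
specific_cons = [
    "Produces generic output for healthcare and legal topics",
    "Business tier costs 340% more than Team tier",
]
print(cons_look_perfunctory(pros, ["Minor learning curve"]))  # True
print(cons_look_perfunctory(pros, specific_cons))             # False
```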

Persistent Promotion of High-Commission Tools

Certain AI tools offer affiliate commissions significantly above category norms (50%+ recurring, or flat bounties exceeding $100 per conversion). If a review site consistently recommends the same 2-3 solutions across multiple categories—particularly newer tools with limited track records—investigate whether those companies are known for aggressive affiliate programs.
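Spotting the "same 2-3 solutions everywhere" pattern is a counting exercise: tally which tools a site names as a top pick in each category and see which names recur. The category data below is hypothetical and the three-category cutoff is an assumption; a tool that genuinely spans categories can trip it legitimately, so treat a hit as a prompt to look into that tool's affiliate program, not as proof. The commission check applies the thresholds quoted above.

```python
from collections import Counter
from typing import Dict, List, Optional


def repeated_top_picks(top_picks: Dict[str, List[str]], min_categories: int = 3) -> List[str]:
    """Tools named as a top pick in at least `min_categories` categories."""
    counts = Counter(tool for picks in top_picks.values() for tool in set(picks))
    return sorted(tool for tool, n in counts.items() if n >= min_categories)


def above_norm_commission(recurring_rate: Optional[float], flat_bounty: Optional[float]) -> bool:
    """50%+ recurring commission, or a flat bounty over $100 per conversion."""
    return (recurring_rate is not None and recurring_rate >= 0.5) or (
        flat_bounty is not None and flat_bounty > 100
    )


# Hypothetical "best of" pages from one review site.
site_picks = {
    "writing": ["ToolX", "ToolY"],
    "seo": ["ToolX", "ToolZ"],
    "email": ["ToolX", "ToolY"],
    "video": ["ToolY", "ToolW"],
}
print(repeated_top_picks(site_picks))  # ['ToolX', 'ToolY']
print(above_norm_commission(0.5, None), above_norm_commission(0.3, 75))  # True False
```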

Pricing Discussion Without Value Assessment

Reviews mentioning pricing without explicitly evaluating whether costs are justified by delivered value omit essential decision-making information. Statements like “pricing starts at $49/month” without subsequent analysis of ROI for different user types, comparison to functionally similar alternatives, or discussion of pricing tier appropriateness indicate content optimized for conversion rather than education.
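The missing step is usually simple arithmetic. One common way to frame it is monthly return on investment: the value of the time a tool saves, minus its price, divided by its price. Every input below (hours saved, a $50 hourly rate) is a hypothetical assumption; the point is that a useful review gives you enough information to estimate these numbers for your own situation.

```python
def monthly_roi(price_per_month: float, hours_saved: float, hourly_rate: float) -> float:
    """Return on investment for one month: (value of time saved - price) / price."""
    if price_per_month <= 0:
        raise ValueError("price_per_month must be positive")
    value = hours_saved * hourly_rate
    return (value - price_per_month) / price_per_month


def break_even_hours(price_per_month: float, hourly_rate: float) -> float:
    """Hours the tool must save each month just to pay for itself."""
    return price_per_month / hourly_rate


# Hypothetical: a $49/month tool for someone whose time is worth $50/hour.
print(f"Break-even: {break_even_hours(49, 50):.2f} hours/month")
print(f"ROI if it saves 3 hours: {monthly_roi(49, 3, 50):.0%}")
```

A review that states the price but never helps you estimate `hours_saved` leaves out the half of this calculation that matters.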

Marketing Language Replication

Reviews recycling phrasing from vendor websites (“revolutionary AI technology,” “game-changing productivity boost,” “industry-leading innovation”) suggest heavy reliance on manufacturer-provided materials rather than independent testing. Original analysis produces original language—promotional replication indicates promotional intent.
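One way to quantify replication is to measure how many of a review's three-word phrases also appear in the vendor's own copy. This word-trigram overlap is a crude heuristic chosen for illustration, not a standard plagiarism metric; you paste in both texts yourself, and what counts as "too high" is a judgment call. The sample sentences are hypothetical.

```python
import re
from typing import Set, Tuple


def trigrams(text: str) -> Set[Tuple[str, ...]]:
    """Lowercased word trigrams, ignoring punctuation."""
    words = re.findall(r"[a-z0-9']+", text.lower())
    return {tuple(words[i:i + 3]) for i in range(len(words) - 2)}


def copied_share(review: str, vendor_copy: str) -> float:
    """Fraction of the review's trigrams that also occur in the vendor copy."""
    review_grams = trigrams(review)
    if not review_grams:
        return 0.0
    return len(review_grams & trigrams(vendor_copy)) / len(review_grams)


vendor = "Revolutionary AI technology delivers a game-changing productivity boost."
review = "This revolutionary AI technology delivers a game-changing productivity boost for teams."
print(f"{copied_share(review, vendor):.0%} of trigrams copied")
```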

Absence of Update History

AI tools releasing major updates monthly require correspondingly current reviews. Sites without visible publication dates, update notifications, or version-specific evaluations may be ranking obsolete content that misleads readers about current capabilities and limitations.

Missing Disclosure of Financial Relationships

FTC guidelines and similar international regulations require clear disclosure of affiliate relationships and sponsored content. Sites earning commissions without transparent acknowledgment operate outside compliance norms, suggesting broader ethical flexibility.

The Professional’s Research Workflow: A Systematic Evaluation Process

This structured approach maximizes information value while minimizing time investment. I’ve refined this process through years of client consultation and personal tool evaluation:

Phase 1: Broad Sentiment Mapping (15 minutes)

Conduct initial searches combining the tool name with “review,” “alternative,” and “vs [competitor].” Skim first-page results without deep reading, noting:

- Overall sentiment distribution (predominantly positive, mixed, or critical)

- Specific complaints appearing across multiple sources

- Tools frequently mentioned as alternatives

- Recent news or controversy (pricing changes, feature removals, security incidents)

Create a simple document capturing recurring themes—don’t judge validity yet, just document frequency.
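The queries and the frequency-only tally in this phase can be kept consistent across tools with a few lines of code. This is a sketch: the tool names and the themes in the notes are hypothetical, and each source is counted once per theme so one repetitive article cannot inflate a count.

```python
from collections import Counter
from typing import Dict, List


def phase1_queries(tool: str, competitors: List[str]) -> List[str]:
    """Search strings for the broad scan: review, alternative, and head-to-head queries."""
    queries = [f"{tool} review", f"{tool} alternative"]
    queries += [f"{tool} vs {rival}" for rival in competitors]
    return queries


def theme_frequency(notes: Dict[str, List[str]]) -> Counter:
    """Count how many sources mention each theme (once per source)."""
    return Counter(theme for themes in notes.values() for theme in set(themes))


print(phase1_queries("ToolX", ["ToolY", "ToolZ"]))

# Hypothetical notes from skimming first-page results.
notes = {
    "blog-a": ["price increase", "slow support"],
    "blog-b": ["price increase"],
    "video-c": ["price increase", "slow support", "great templates"],
}
print(theme_frequency(notes).most_common())
```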

Phase 2: Deep Evaluation of Credible Sources (30 minutes)

Select 2-3 sources from your initial scan that demonstrated the positive signals identified earlier (specific claims, substantive criticism, methodology transparency, prominent disclosure). Read these thoroughly, focusing on:

- Detailed feature descriptions relevant to your specific use case

- Documented limitations that might affect your workflow

- Pricing analysis appropriate to your budget and scale

- Comparison points with tools you’re already considering or using

Take structured notes organized by decision criteria (features, pricing, integration, support, etc.).

Phase 3: User Review Pattern Analysis (20 minutes)

Visit G2, Capterra, or Trustpilot and sort reviews by lowest ratings. Read 10-15 critical reviews from the past 6 months, specifically seeking:

- Recurring technical issues or bugs

- Customer service complaints (response time, resolution quality, refund handling)

- Billing problems or unexpected charges

- Feature promises unfulfilled in actual usage

- Performance degradation reports

Document patterns appearing in 3+ reviews—these represent genuine risk factors.
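If you copy low-rated reviews into a file as you read them, the 3+ rule is easy to apply mechanically. The sketch assumes each review is saved as a date, star rating, and text; the keyword lists mapping text to complaint categories are hypothetical starting points, and keyword matching will miss complaints phrased differently, so keep reading the texts as well.

```python
from collections import Counter
from dataclasses import dataclass
from datetime import date, timedelta
from typing import Dict, List

# Hypothetical keyword lists; refine them as you read actual reviews.
CATEGORIES: Dict[str, List[str]] = {
    "support": ["support", "no response", "ticket"],
    "billing": ["charged", "refund", "billing"],
    "performance": ["slow", "crash", "timeout"],
}


@dataclass
class Review:
    posted: date
    stars: int
    text: str


def recurring_complaints(reviews: List[Review], today: date, max_stars: int = 2,
                         months: int = 6, min_reviews: int = 3) -> Dict[str, int]:
    """Complaint categories mentioned in at least `min_reviews` recent low-rated reviews."""
    cutoff = today - timedelta(days=30 * months)  # approximate month length
    counts: Counter = Counter()
    for review in reviews:
        if review.posted < cutoff or review.stars > max_stars:
            continue
        text = review.text.lower()
        for category, keywords in CATEGORIES.items():
            if any(k in text for k in keywords):
                counts[category] += 1  # each review counts once per category
    return {c: n for c, n in counts.items() if n >= min_reviews}


reviews = [
    Review(date(2025, 5, 10), 1, "Support never answered my ticket."),
    Review(date(2025, 4, 2), 2, "Charged twice, still waiting on a refund."),
    Review(date(2025, 3, 15), 1, "No response from support for a week."),
    Review(date(2025, 2, 20), 2, "Slow exports and support was unhelpful."),
    Review(date(2024, 6, 1), 1, "Support ignored me."),  # outside the window
    Review(date(2025, 5, 1), 5, "Support was great!"),   # not a low rating
]
print(recurring_complaints(reviews, today=date(2025, 6, 1)))  # {'support': 3}
```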

Phase 4: Community Intelligence Gathering (20 minutes)

Search Reddit and relevant Discord/Slack communities for the tool name combined with terms like “issue,” “problem,” “alternative,” “worth it,” or “recommend.” Focus on posts from the past 3-6 months.

Community discussions reveal:

- Recent changes in quality or pricing

- Edge cases and unusual use case limitations

- Workarounds for documented problems

- Competitive alternatives users are actually switching to
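For the last point, a simple pattern match over posts you have collected can tally which tools people say they moved to. The regular expression below is an assumption that covers a few common phrasings ("switched to", "moved over to", "migrated to") followed by a capitalized product name; real posts vary, so expect misses. The posts and tool names are hypothetical.

```python
import re
from collections import Counter
from typing import List

# Hypothetical phrasing pattern; captures a capitalized name after the verb.
SWITCH_PATTERN = re.compile(
    r"\b(?:[Ss]witched|[Mm]oved|[Mm]igrated) (?:over )?to ([A-Z]\w*(?:[.-]\w+)*)"
)


def switching_targets(posts: List[str]) -> Counter:
    """Count tools that posters say they switched to."""
    return Counter(name for post in posts for name in SWITCH_PATTERN.findall(post))


posts = [
    "Quality dropped after the update, so I switched to ToolY.",
    "We migrated to ToolY last month and support is better.",
    "Honestly moved over to ToolZ because of the price hike.",
    "Still happy with it, no plans to change.",
]
print(switching_targets(posts).most_common())
```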

Phase 5: Direct Validation (Variable)

If the tool offers a free trial or freemium tier, conduct your own limited testing:

- Design a realistic task matching your actual workflow (not the demo scenario)

- Document time-to-completion, output quality, and iteration requirements

- Test customer support responsiveness with a pre-sales question

- Evaluate the cancellation process before committing payment information
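Recording trial results in a consistent structure makes it easier to compare tools afterward and hold each one to the same bar. The fields mirror the checklist above; the record format, the sample values, and the 24-hour support-response cutoff are hypothetical choices for illustration.

```python
from dataclasses import dataclass
from statistics import mean
from typing import List


@dataclass
class TrialTask:
    name: str
    minutes_to_complete: float
    edits_needed: int


@dataclass
class TrialLog:
    tool: str
    tasks: List[TrialTask]
    support_reply_hours: float  # time to answer a pre-sales question
    cancellation_clear: bool    # could you find and understand how to cancel?

    def summary(self, max_support_hours: float = 24) -> str:
        avg_minutes = mean(t.minutes_to_complete for t in self.tasks)
        avg_edits = mean(t.edits_needed for t in self.tasks)
        concerns = []
        if self.support_reply_hours > max_support_hours:
            concerns.append("slow pre-sales support")
        if not self.cancellation_clear:
            concerns.append("unclear cancellation")
        return (f"{self.tool}: {avg_minutes:.1f} min/task, {avg_edits:.1f} edits/task; "
                f"concerns: {', '.join(concerns) or 'none'}")


log = TrialLog(
    tool="ToolX",
    tasks=[TrialTask("client newsletter draft", 12, 3), TrialTask("product FAQ", 8, 1)],
    support_reply_hours=30,
    cancellation_clear=True,
)
print(log.summary())
```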

This systematic process requires approximately 60-90 minutes but prevents costly subscription commitments to inadequate tools. The return on time invested is substantial—I’ve guided clients away from $10,000+ annual commitments to unsuitable platforms and helped others confidently adopt tools they were initially hesitant to try.

What Excellence Looks Like: Characteristics of Superior AI Review Platforms

Despite widespread quality issues, genuinely valuable AI review resources exist. The best demonstrate these operational standards:

Rigorous Testing Frameworks

Rather than impressionistic evaluation, superior sites implement standardized testing protocols. Each tool undergoes identical task sequences enabling direct performance comparison. This systematic approach produces more reliable rankings and reduces gaming opportunities.

Living Document Philosophy

Top-tier review sites treat content as continuously evolving. Major product updates trigger immediate review revisions with clear change documentation. Some maintain version-specific evaluations allowing readers to track evolution over time.

Editorial Independence Over Revenue

The most trusted reviewers occasionally publish updated assessments downgrading previously recommended tools when quality deteriorates or competitors surpass them—even when these changes negatively impact affiliate income. This long-term credibility prioritization over short-term revenue signals genuine commitment to user value.

Comprehensive Operational Coverage

Excellence extends beyond feature evaluation to address implementation realities: data security certifications, API documentation quality, customer support channel responsiveness, account management practices, and ethical AI policies. These factors determine long-term success but rarely appear in conversion-optimized content.

Transparent Correction Practices

Credible sources promptly acknowledge and correct factual errors, update recommendations based on new information, and engage constructively with reader feedback challenging their assessments.

Conclusion: Navigating AI Review Sites in 2025

The current AI review ecosystem presents a paradox: unprecedented information availability coexists with unprecedented difficulty accessing reliable guidance. The tools for making informed decisions exist, but extracting signal from noise requires developed critical reading skills and systematic research approaches.

The fundamental principles for effective navigation:

Maintain healthy skepticism toward uniformly positive coverage. Your intuition about reviews feeling “too promotional” or suspiciously vague is usually accurate. Trust these instincts and seek additional sources.

Diversify information sources. No single review type provides complete perspective. Combine expert analysis, aggregated user feedback, community intelligence, and direct testing for balanced understanding.

Prioritize recent, specific, critical content. Reviews documenting actual limitations with recent timestamps provide more value than comprehensive feature lists from outdated sources.

Invest time in systematic evaluation. The hour spent in structured research prevents months of frustration with inadequate tools and wasted subscription costs.

The AI tools landscape will continue evolving rapidly, and review quality will likely remain uneven. However, readers equipped with critical evaluation frameworks and systematic research processes can consistently identify genuine value amid promotional noise.

Frequently Asked Questions About AI Review Sites

Are AI review sites financially incentivized to recommend specific tools?

Most operate on affiliate commission models, typically earning 20-40% recurring commissions on referred subscriptions. This doesn’t automatically invalidate their recommendations, but it creates structural incentives favoring high-commission, high-converting tools over the best solution for a specific use case. Transparent disclosure and substantive critical analysis indicate sites managing this conflict responsibly.

Which AI review platforms offer the most reliable guidance?

Independent expert blogs with transparent testing methodologies and documented professional experience provide the most nuanced analysis for complex evaluations. For real-world operational insights, user-generated platforms (G2, Capterra) and unfiltered community discussions (Reddit, Discord) reveal patterns formal reviews often miss. YouTube demonstrations offer transparency difficult to fake in written content.

How can I identify outdated AI tool reviews?

Verify both publication dates and last-updated timestamps. In the current AI landscape, content older than 6-12 months may reference obsolete features, pricing, or performance characteristics. Cross-reference mentioned capabilities against the vendor’s current website, and prioritize sources with documented update histories.

Should I trust user reviews on enterprise software platforms?

Approach with informed caution. While volume provides valuable signal, particularly in negative review patterns, be aware that companies sometimes incentivize positive reviews. Focus analysis on specific, detailed complaints rather than star ratings alone. Reviews describing exact problems with technical details generally prove more reliable than generic praise.

What’s the most effective approach for evaluating AI tools beyond reading reviews?

Implement structured free trial testing using realistic tasks from your actual workflow—not optimized demo scenarios. Document time investment, output quality, iteration requirements, and support responsiveness. This direct experience, combined with systematic review research, produces the most reliable evaluation foundation.

How do I spot AI review sites that prioritize affiliate revenue over accuracy?

Warning signs include: uniformly high ratings across diverse tools, consistently brief/generic criticism sections, heavy promotion of known high-commission products, absence of pricing value analysis, recycling of vendor marketing language, missing financial relationship disclosures, and stale content without update histories.