TL;DR: Most AI tools’ “ethical” claims are marketing fluff. Evaluate platforms on data transparency, privacy, bias mitigation, and human oversight. Claude leads on ethics; ChatGPT is powerful but complex; Jasper works for enterprises; Copy.ai is fine for low-stakes tasks. Never input sensitive data without verified protections, and always human-review AI outputs—because you bear the consequences when tools cut corners.

The Ethics Gap in AI Marketing

If you’ve been evaluating AI tools for your business lately, you’ve undoubtedly encountered the same relentless messaging: every platform claims to be “responsible,” “safe,” “transparent,” and “ethical.” These terms have become the new standard in AI marketing copy, replacing previous buzzwords like “cutting-edge” and “revolutionary.” But after four years of professional AI tool testing and integration, I’ve discovered a troubling reality—the chasm between what AI companies promise about their ethical standards and what they actually deliver is vast and growing.

I’m James G. Cunningham, a Senior Digital Marketing Consultant and AI Tools Specialist based in Austin, Texas. Since the GPT-3 beta days of 2021, I’ve tested over 150 AI platforms, managed comprehensive MarTech stacks for clients ranging from solo consultants to mid-market enterprises, and learned some expensive lessons along the way. My most costly mistake? Signing a 12-month contract with an AI platform that showcased a stunning demo but buried genuinely concerning data harvesting practices deep within their terms of service. That $5,000 mistake taught me to look far beyond feature lists and marketing gloss.

In this guide, I’ll explain what actually matters when evaluating AI platforms for ethical standards, share the framework I’ve developed for assessing responsible-AI claims, and give honest, unfiltered assessments of today’s major players. This isn’t a vendor-sponsored comparison or an affiliate-driven roundup. It’s what I actually think after years of hands-on implementation.

Why Ethical AI Tool Reviews Are Critical in 2024

The stakes for AI ethics have never been higher. Artificial intelligence tools have evolved from experimental novelties to core infrastructure handling sensitive customer data, generating content that audiences trust implicitly, and making automated recommendations that impact real people’s lives and livelihoods. When the AI platforms you choose cut ethical corners, you absorb the downstream consequences—not the vendor who sold you the tool.

I learned this lesson painfully while helping a mid-size e-commerce client build AI-assisted customer service infrastructure. We selected a popular chatbot platform based on surface-level features and pricing, and I failed to conduct deep due diligence on its model training methodology. The problem surfaced when a competitor’s investigative blog post revealed that the platform’s training data included user conversations scraped from third-party sources without clear consent mechanisms. My client found out from that public post rather than from the vendor, and the resulting crisis of trust took months to repair.

Ethical AI implementation isn’t merely a philosophical preference or corporate social responsibility checkbox. It’s fundamental business risk management. Every AI tool evaluation must answer these critical questions: How was this model trained and on what data? What happens to your proprietary inputs? Are the outputs transparent, explainable, and auditable? Does the company possess genuine accountability structures, or merely a glossy webpage titled “Our Commitment to Responsible AI”?

The encouraging news: a growing number of companies are investing seriously in ethical AI infrastructure. The frustrating reality: identifying them requires digging beneath marketing surfaces that are intentionally designed to obscure.

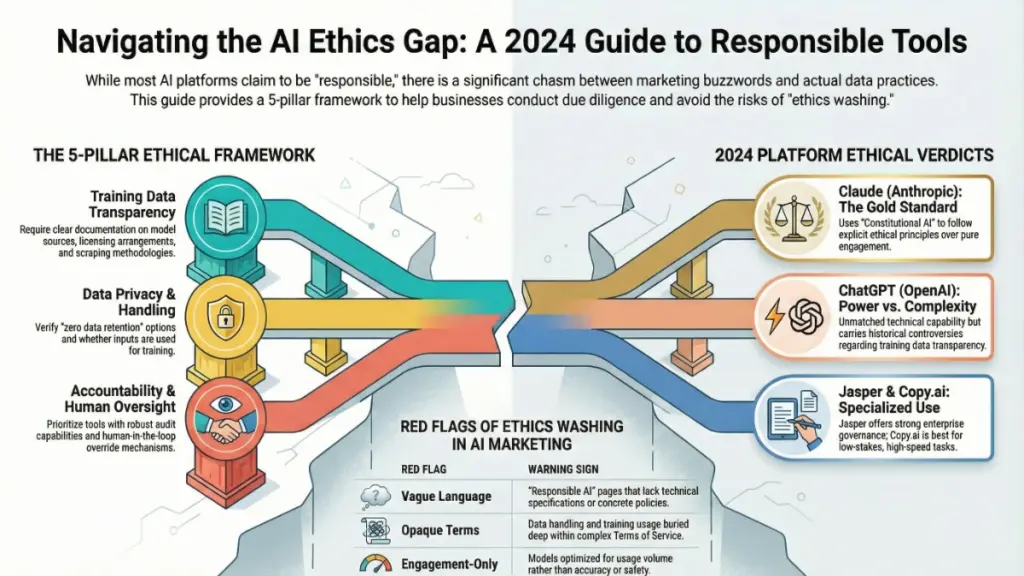

My 5-Pillar Framework for Ethical AI Tools Reviews

Before diving into specific platform assessments, let me share the evaluation framework I’ve developed and refined across dozens of platform reviews. No framework is perfect, but this systematic approach provides consistent criteria for separating genuine ethical commitment from sophisticated “ethics washing.”

Pillar 1: Training Data Transparency

Responsible AI companies should provide clear, accessible information about their models’ training data sources, licensing arrangements, and scraping methodologies. Anthropic exemplifies this standard by publishing detailed model cards and comprehensive usage policy documentation explaining its training approaches. OpenAI has improved significantly since 2022, though gaps remain. Many smaller tools, however, deflect or ignore these questions entirely, which itself speaks volumes about their practices.

Pillar 2: Data Privacy and Handling Protocols

What happens to the inputs you feed into AI tools? Are your prompts, proprietary business information, or customer data used to further train the model? For organizations handling sensitive information, these questions are existential. I specifically verify whether platforms offer “zero data retention” options, enterprise privacy tiers, or data processing agreements. Claude’s API, for instance, does not use customer inputs for model training by default. ChatGPT’s consumer tiers, by contrast, use conversations for training by default; an opt-out is available, though many users remain unaware of it.

Pillar 3: Bias Mitigation and Content Safeguards

Truly ethical AI tools acknowledge inherent model biases and implement visible, documented systems for reducing harmful outputs. Perfection is impossible—all models carry biases—but genuine commitment means active wrestling with these challenges, not publishing superficial content policies and declaring victory.

Pillar 4: Accountability and Human Oversight Mechanisms

Enterprise-grade AI tools distinguish themselves through robust audit capabilities. Can you track what outputs were generated and why? Can human reviewers override AI decisions? For marketing applications requiring brand safety at scale, these oversight features are non-negotiable.

Pillar 5: Business Model Alignment with Ethical Claims

This criterion sounds abstract but proves highly practical. Companies monetizing through engagement maximization face structural conflicts with ethical AI commitments—they’re incentivized to keep users hooked regardless of content quality. Subscription-based models demonstrate better alignment, as their success depends on genuine long-term utility and trust rather than addiction metrics.
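If you’re comparing several vendors side by side, it can help to record the five pillars as a simple checklist. The Python sketch below is one way to do that, assuming each pillar is reduced to a single yes/no question answered from the vendor’s published documentation. The pillar keys, the question wording, and the `VendorAssessment` class are hypothetical illustrations, not a standard rubric. A pillar you couldn’t verify counts as unmet, in keeping with the point that a vendor who deflects your questions is telling you something.

```python
from dataclasses import dataclass, field

# Hypothetical encoding of the five pillars as yes/no questions.
# Keys and wording are illustrative paraphrases, not an industry standard.
PILLARS = {
    "training_data_transparency": "Are training data sources and licensing documented?",
    "data_privacy": "Are inputs excluded from training by default, or covered by a DPA?",
    "bias_mitigation": "Are bias and safety safeguards documented concretely?",
    "accountability": "Are audit logs and human override available?",
    "business_model_alignment": "Does revenue depend on long-term utility, not engagement?",
}


@dataclass
class VendorAssessment:
    name: str
    # Pillar key -> True only if verified in the vendor's documentation.
    answers: dict[str, bool] = field(default_factory=dict)

    def unmet_pillars(self) -> list[str]:
        """Pillars answered 'no' or left unverified, in framework order."""
        return [key for key in PILLARS if not self.answers.get(key, False)]

    def score(self) -> int:
        """Number of verified pillars, from 0 to 5."""
        return len(PILLARS) - len(self.unmet_pillars())


if __name__ == "__main__":
    tool = VendorAssessment("ExampleTool", {"data_privacy": True, "accountability": True})
    print(tool.score())          # 2
    print(tool.unmet_pillars())  # ['training_data_transparency', 'bias_mitigation', 'business_model_alignment']
```

A raw count like this is a starting point for discussion, not a verdict: a missing data privacy pillar may be disqualifying for customer-facing work and irrelevant for brainstorming ad headlines.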

Ethical AI Tool Reviews: Platform Analysis

Claude (Anthropic) — The Current Gold Standard for Responsible AI

Full disclosure: I use Claude daily in my consultancy work. However, I’ve systematically pressure-tested it against competitors, and the results consistently favor Anthropic’s approach.

Anthropic pioneered “Constitutional AI,” a training approach in which the model critiques and revises its own outputs against a written set of principles (a “constitution”) rather than relying solely on human ratings of its responses. In practical application, this manifests as a model that challenges ethically questionable requests, explains its reasoning when declining tasks, and behaves like a thoughtful collaborator rather than an obedient yes-machine.

For content marketing applications, this ethical friction proves invaluable. I once asked Claude for help with a manipulative urgency-based email sequence, the kind that claimed “only 3 spots remaining” when inventory was actually abundant. Claude completed the technical structure but proactively flagged the honesty concerns, suggesting alternatives that kept the persuasive impact without the deception. This kind of ethical guardrail is precisely what brands need when AI represents their voice to customers.

Documentation and Transparency: Anthropic publishes extensive usage policies, detailed model cards, and ongoing safety research. Its privacy terms are cleaner than the industry average, with strong default protections for API users.

Limitations: Claude occasionally exhibits excessive caution, creating friction on requests that are genuinely appropriate. This can frustrate users seeking seamless workflow integration. Personally, I prefer tools that err toward ethical conservatism rather than those generating any requested content without consideration.

Pricing Structure: Claude Pro costs $20/month for individual users, with transparent team and API tier pricing. No opaque “contact sales” requirements for standard plans—a refreshing contrast to industry norms.

ChatGPT (OpenAI) — Powerful Capabilities, Complex Ethics

Straight talk: ChatGPT represents extraordinary technical achievement, and I use it regularly for appropriate applications. However, OpenAI presents genuine ethical tensions that most reviews gloss over or ignore entirely.

The company has navigated high-profile governance crises, made ambitious AGI safety commitments while simultaneously racing to commercialize products, and faced multiple training data controversies. None of this necessarily renders their tool unethical—but context matters for informed decision-making.

Practical Improvements: ChatGPT’s data handling has evolved positively. Users can now opt out of conversation-based training, and enterprise plans offer substantive privacy guarantees. GPT-4 generates consistently high-quality outputs with unmatched capability breadth.

Critical Cautions: The default model tends toward excessive agreeableness, sometimes prioritizing user satisfaction over accuracy. For high-stakes content generation, this produces confident-sounding but potentially incorrect information. Rigorous fact-checking remains essential.

Verdict: ChatGPT remains excellent for most marketing applications—provided users understand the broader organizational context and maintain active critical engagement with outputs.

Jasper — Enterprise Governance Strength, Transparency Gaps

Jasper has invested substantially in enterprise-grade content governance infrastructure. Its platform offers sophisticated brand voice controls, compliance workflows, and team-level oversight tools that genuinely serve large organizations with strict content policies. Responsive customer support provides a crucial safety net when issues arise.

Ethical Concerns: Jasper’s primary transparency gap involves underlying model training and infrastructure-level data practices. The platform operates on third-party models (primarily OpenAI’s), meaning their ethical commitments partially depend on upstream vendor decisions beyond their direct control. They’re transparent about this dependency when asked—but the limitation warrants awareness.

Best Fit: Content teams of 10+ members requiring structured governance workflows will find Jasper’s team features genuinely impressive. For solo marketers, the pricing ($49–$125/month depending on tier) proves difficult to justify against alternatives.

Copy.ai — Speed-Focused, Ethically Light

Copy.ai delivers exceptional speed and ease of use for high-volume marketing copy generation. However, any honest review has to acknowledge that its responsible-AI infrastructure is thinner than that of the platforms above.

Documentation Gaps: Training data transparency, bias mitigation strategies, and model governance documentation remain limited. Support responsiveness to data practice inquiries has proven inconsistent in my experience.

Risk Assessment: This doesn’t render Copy.ai unsuitable—it means restricting usage to non-sensitive applications, minimizing input data specificity, and treating all outputs as first drafts requiring human review. Appropriate for quick social media copy or ad variations. Inappropriate for customer data processing or regulated industries (health, finance, legal).

Red Flags: Identifying Ethics Washing in AI Marketing

After reviewing dozens of platforms, I’ve developed a comprehensive warning system for identifying when AI ethics claims exceed actual commitment:

Vague “Responsible AI” Pages Without Substance

If ethics documentation consists entirely of aspirational language without concrete policies, technical specifications, or accountability mechanisms, proceed with caution. Writing a “We Believe in Responsible AI” blog post requires 20 minutes. Building actual safety infrastructure requires years.

Opaque Data Handling Terms

When information about training data usage is buried on page 14 of the terms of service, or when clear answers prove elusive, ethical concerns are warranted. Responsible companies display these policies prominently because they’re proud of their protections.

Engagement-Only Optimization

Tools designed purely to maximize usage duration and content generation volume create structural conflicts with ethical AI. These systems subtly push outputs toward sensationalism rather than accuracy—problems no content filter fully resolves.

Unexplainable Decision-Making

Any tool unable to articulate why it generated specific outputs or made particular decisions lacks fundamental accountability infrastructure. Explainability isn’t optional for ethical AI deployment.

Implementing Ethical AI: Beyond Tool Selection

Here’s what conventional AI tool reviews miss: even the most responsibly designed platform can be used unethically. The tool is only half the equation.

Data Handling Best Practices

Never paste sensitive customer data into AI tools without verified enterprise-grade protections. I’ve repeatedly witnessed teams dump CRM exports into ChatGPT for “faster data cleaning”—creating massive data privacy violations and regulatory risks.
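Where a task genuinely needs real records, one partial safeguard is to strip obvious identifiers before anything leaves your systems. The Python sketch below assumes regular-expression redaction is acceptable for your data; the `redact` function and its two patterns are hypothetical and only catch common email formats and US-style ten-digit phone numbers. It will miss names, street addresses, and account numbers, and it may redact harmless ten-digit strings, so treat it as a supplement to verified enterprise protections, never a replacement for them.

```python
import re

# Illustrative patterns only: common email formats and US-style phone numbers.
# They do NOT catch names, addresses, account numbers, or other identifiers.
EMAIL_RE = re.compile(r"[\w.+-]+@[\w-]+(?:\.[\w-]+)+")
PHONE_RE = re.compile(r"\(?\b\d{3}\)?[-.\s]?\d{3}[-.\s]?\d{4}\b")


def redact(text: str) -> str:
    """Replace email addresses and phone numbers with placeholder tokens."""
    # Emails first, so digits inside an address aren't mistaken for a phone number.
    text = EMAIL_RE.sub("[EMAIL]", text)
    text = PHONE_RE.sub("[PHONE]", text)
    return text


if __name__ == "__main__":
    print(redact("Contact jane.doe@example.com or 512-555-0147."))
    # Contact [EMAIL] or [PHONE].
```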

Transparency and Disclosure

Disclose AI assistance where relevant. Marketing clients deserve to know when content is AI-generated, and audiences benefit from transparency about AI-assisted articles. While disclosure isn’t yet legally required in most jurisdictions, doing it proactively builds long-term trust and anticipates emerging regulations.

Human Review Requirements

Treat all AI outputs as drafts requiring human review for accuracy, brand alignment, and ethical considerations before publication. The moment AI-generated content bypasses human oversight is the moment liability accumulates.

Internal AI Governance Policies

Develop organizational content policies for AI usage. I’ve assisted approximately a dozen companies in creating these frameworks over the past two years, and every organization has benefited from proactive implementation. Even a single-page document covering approved use cases, prohibited inputs, and review requirements prevents significant future complications.
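Those three elements can also be written in a form your tooling can check before anything goes live. The Python sketch below assumes each AI-assisted draft is logged with its use case, the categories of data that went into the prompt, and whether a person reviewed the output. The class names, category labels, and `check_draft` function are hypothetical, chosen to mirror the approved use cases, prohibited inputs, and review requirement a one-page policy would cover.

```python
from dataclasses import dataclass, field


@dataclass(frozen=True)
class AIUsagePolicy:
    # Hypothetical one-page policy: what AI may be used for, what it may never
    # see, and whether a person must sign off before publication.
    approved_use_cases: frozenset[str]
    prohibited_inputs: frozenset[str]
    require_human_review: bool = True


@dataclass
class AIDraft:
    use_case: str
    input_categories: set[str] = field(default_factory=set)
    human_reviewed: bool = False


def check_draft(policy: AIUsagePolicy, draft: AIDraft) -> list[str]:
    """Return policy violations for a draft; an empty list means it may be published."""
    violations = []
    if draft.use_case not in policy.approved_use_cases:
        violations.append(f"use case not approved: {draft.use_case}")
    blocked = sorted(draft.input_categories & policy.prohibited_inputs)
    if blocked:
        violations.append(f"prohibited inputs used: {', '.join(blocked)}")
    if policy.require_human_review and not draft.human_reviewed:
        violations.append("output has not been human-reviewed")
    return violations


if __name__ == "__main__":
    policy = AIUsagePolicy(
        approved_use_cases=frozenset({"social_copy", "ad_variations"}),
        prohibited_inputs=frozenset({"customer_pii", "financial", "health", "trade_secrets"}),
    )
    ok = AIDraft("social_copy", {"product_specs"}, human_reviewed=True)
    risky = AIDraft("support_replies", {"customer_pii"})
    print(check_draft(policy, ok))     # []
    print(check_draft(policy, risky))  # three violations
```

Returning a list of reasons rather than a single pass/fail keeps the cause visible to whoever is blocked, which makes the policy easier both to follow and to revise.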

Conclusion: Your Ethical AI Roadmap

A genuinely useful ethical review must go beyond superficial feature comparisons and output-quality assessments. The platforms you integrate shape your brand identity, data practices, and organizational values, whether intentionally or not.

Honest Summary:

- Claude (Anthropic) currently leads in ethics-first design, with research and documentation demonstrating commitment beyond marketing

- ChatGPT (OpenAI) offers unmatched power with real-world complexity requiring informed usage

- Jasper provides enterprise solidity with upstream dependency limitations

- Copy.ai serves low-stakes speed applications adequately

The Critical Takeaway: Never outsource ethical judgment to vendor marketing teams. Read documentation thoroughly, ask difficult questions about data handling, and build mandatory human review into all workflows. The AI tools market is maturing rapidly—companies genuinely investing in responsible AI will earn lasting loyalty. Those cutting corners will ultimately cost more than their subscription fees.

Frequently Asked Questions About Ethical AI Tools

What defines an “ethical” AI tool in practice? Ethical AI tools demonstrate transparency regarding training data sources, maintain clear user-friendly privacy policies, implement documented bias mitigation, support human oversight and accountability, and operate business models that don’t incentivize harmful outputs. This combination of technical, policy, and structural factors distinguishes genuine commitment from marketing rhetoric.

Is ChatGPT ethically appropriate for business use? The answer requires nuance. ChatGPT proves generally safe for most business applications when using enterprise tiers with appropriate data protections and maintaining human output review. Broader questions about OpenAI’s governance and training practices provide important context without necessarily rendering the tool inappropriate—they simply inform responsible usage.

Must businesses disclose AI-generated content? Legal requirements vary by jurisdiction and context. From practical and ethical standpoints, however, transparency with audiences and clients regarding AI assistance builds long-term trust and positions organizations ahead of emerging regulatory requirements. When uncertain, disclosure is the prudent choice.

What data categories should never enter AI tools? Avoid inputting personally identifiable customer information, financial data, health information, proprietary trade secrets, or any data governed by GDPR, HIPAA, or similar regulations unless the tool has confirmed enterprise-grade protections and executed formal data processing agreements.

How frequently should AI tool ethics be re-evaluated? Minimum annual review is essential, though six-month cycles are more realistic given rapid industry evolution. Corporate acquisitions, policy modifications, and competitive improvements occur constantly. Last year’s most ethical option may not retain that position today.