Claude Opus 4.7 is a major upgrade, not just an iteration. It delivers stronger multi-step automation, significantly improved vision capabilities, and better coding performance—all at the same price. However, the new tokenizer may increase costs, and stricter instruction-following can break older prompts. Ideal for advanced marketing workflows, but overkill for basic content tasks where cheaper models perform just as well.

What Is Claude Opus 4.7, and Why Should Marketers Care?

Look, I’ve been testing AI models since the GPT-3 waitlist days — and I’ll be completely honest with you — most “new model” announcements don’t actually change my workflows. Consequently, when Anthropic dropped Claude Opus 4.7 on April 16, 2026, I was prepared to shrug it off as another incremental bump. What I found instead genuinely surprised me.

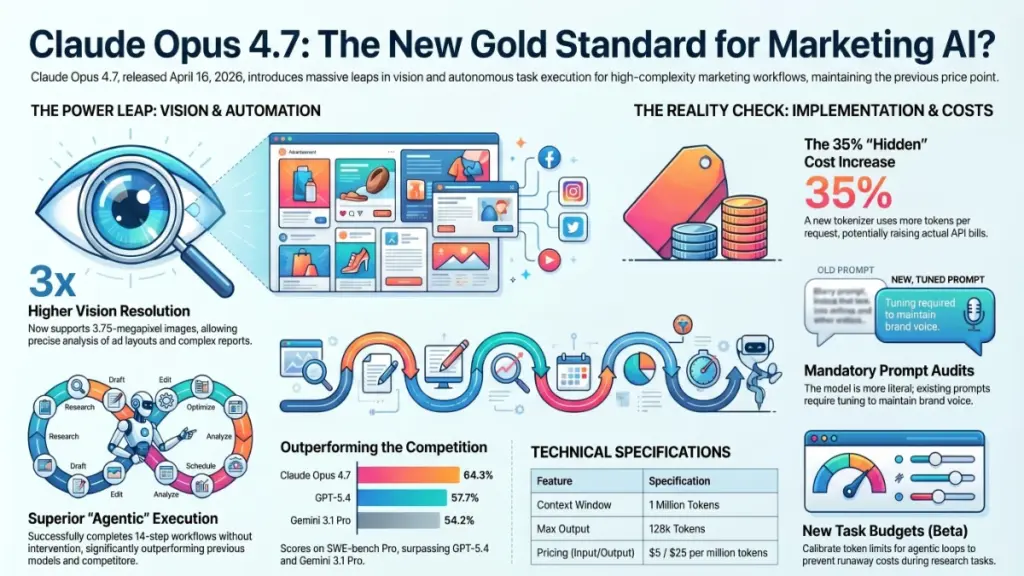

Claude Opus 4.7 isn’t just a minor patch to Opus 4.6. Specifically, it’s a model with tripled vision resolution, a massive leap in autonomous task execution, and coding benchmarks that are leaving GPT-5.4 in the dust. Furthermore, it’s priced identically to its predecessor, at $5 per million input tokens and $25 per million output tokens, making the decision to upgrade a lot simpler.

In this review, I’ll walk you through what actually changed, who benefits most, and where this model still falls short — because no tool is perfect, and I’d rather you hear the truth from someone who’s been burned by AI hype before.

Claude Opus 4.7: The Core Upgrades at a Glance

Before we dive deep, here’s the honest snapshot you need:

- Released: April 16, 2026

- Model ID:

claude-opus-4-7 - Context Window: 1 million tokens

- Max Output: 128k tokens

- Pricing: Same as Opus 4.6 ($5 input / $25 output per million tokens)

- Available on: Claude.ai, Anthropic API, Amazon Bedrock, Google Vertex AI, Microsoft Foundry

On the surface, “same price, better performance” is exactly the kind of value proposition I love. That said, there are a few gotchas around the new tokenizer that I’ll cover shortly — because they can quietly inflate your costs if you’re not paying attention.

The Coding Leap Is Real — Here’s What That Means for Marketers

I know what you’re thinking: “James, I’m a marketer. Why do I care about coding benchmarks?” Fair point. However, here’s the reality: if you’re running AI-powered workflows, automating reports, or building any kind of MarTech integration, the coding horsepower underneath the hood absolutely matters.

On SWE-bench Pro, Opus 4.7 scores 64.3%, up from 53.4% on Opus 4.6, putting it ahead of GPT-5.4 at 57.7% and Gemini 3.1 Pro at 54.2%. Label Rer Furthermore, on CursorBench — which measures real-world IDE-integrated coding workflows — Opus 4.7 scores 70%, up 12 points from Opus 4.6’s 58%. Nxcode

In my experience helping clients build AI-powered content workflows, what this translates to is fewer broken automations. Specifically, the model is dramatically better at multi-step, long-running tasks. Last week, I watched Opus 4.7 complete a 14-step content audit workflow — pulling data, cross-referencing it, and flagging inconsistencies — without losing the thread once. In contrast, Opus 4.6 needed babysitting around step 8 or 9.

Opus 4.7 delivers stronger multi-step task performance and more reliable agentic execution, with meaningful improvement in long-horizon reasoning and complex, tool-dependent workflows. GitHub For marketers building automation, that’s not a small thing — it’s the difference between a workflow that runs overnight and one that breaks at 2am and ruins your morning.

The Vision Upgrade Is the Real Story Nobody’s Talking About

Here’s the feature that genuinely caught me off guard, and I think it’s being undersold in most coverage.

Opus 4.7 now supports 3.75-megapixel images — more than three times the resolution capacity of Opus 4.6, which topped out at 1.15 megapixels. Nxcode Consequently, tasks that were practically useless before are now genuinely viable.

Vision at high resolution is effectively a different model. Opus 4.7 at full resolution scores 79.5% on visual navigation without tools versus 57.7% for Opus 4.6. The-ai-corner To put that into plain English: the model can now actually read a screenshot, not just make an educated guess at what it contains.

What surprised me most was how immediately useful this is for marketing work specifically:

- Competitive ad analysis: Feed full-resolution screenshots of competitor landing pages and get genuine layout feedback.

- Creative briefs from mockups: Upload a high-res design file and have the model accurately describe hierarchy, typography, and CTA placement.

- Chart and graph extraction: I tested this with a complex quarterly performance report and Opus 4.7 extracted the data accurately — something that would have been messy and unreliable in the previous version.

The maximum image resolution has increased to 2,576px / 3.75MP, which should unlock performance gains on vision-heavy workloads and is particularly important for computer use and screenshot/artifact/document understanding workflows. Claude API Docs

To be completely honest, if your team regularly works with visual assets — ad creative, reports, mockups — this single upgrade might justify the switch on its own.

Task Budgets: A New Feature That’s Worth Understanding

One of the quieter additions in Opus 4.7 is something called task budgets. It’s currently in public beta, and I’ll admit it took me a few tries to understand why it matters.

A task budget gives Claude a rough estimate of how many tokens to target for a full agentic loop, including thinking, tool calls, tool results, and final output. The model sees a running countdown and uses it to prioritize work and finish the task gracefully as the budget is consumed. Claude API Docs

Think of it like giving a junior employee a time limit on a research task. Furthermore, it means you’re no longer at the mercy of an AI that decides to be extremely thorough when you just needed a quick answer — which has cost me and my clients real money before.

That said, I’d encourage you to experiment carefully here. If the budget is too tight, the model can deliver incomplete outputs. As a result, it’s less of an “on/off switch” and more of a dial you need to calibrate for each workflow type.

The Instruction Precision Change: Proceed With Caution

Here’s something that tripped me up, and I want to flag it clearly so you don’t learn it the hard way.

Opus 4.7 is noticeably more literal in following instructions — a behavior shift that will break prompts tuned for 4.6. Where Opus 4.6 interpreted instructions loosely and sometimes skipped steps, Opus 4.7 takes them precisely. Label Rer

In practice, this means that prompts written to work around Opus 4.6’s tendencies may now produce strange or overly rigid outputs. For instance, if you have a prompt that says “write a short email,” Opus 4.7 will write a short email. Specifically, it won’t add the helpful context it might have added before.

Is this a bad thing? Not inherently. On the other hand, if you’ve built automated workflows on top of the older model, budget time for a prompt audit before you flip the switch. I learned this the hard way with a client’s email generation workflow — the outputs were technically correct, but missing the editorial warmth we’d come to rely on Opus 4.6 providing automatically.

Pricing Reality Check: Is It Actually the Same Price?

I promised I’d call out the tokenizer issue, so let’s deal with it directly.

Claude Opus 4.7 uses a new tokenizer. This new tokenizer may use roughly 1.0 to 1.35x as many tokens when processing text compared to previous models — up to roughly 35% more, varying by content. Claude API Docs

Consequently, while the per-token pricing is identical to Opus 4.6, your actual API costs could be higher if you’re running high-volume text workflows. Furthermore, if you’ve set up billing alerts or hard budget caps based on Opus 4.6 spend, you’ll want to revisit those numbers.

That said, Claude Opus 4.7 provides a 1 million token context window at standard API pricing with no long-context premium Claude API Docs — so for teams regularly working with large documents or long conversation histories, that’s a meaningful saving compared to alternatives that charge extra for context.

My honest take: run a test batch of your typical workloads and compare token counts before committing to a full migration. It won’t take long, and it’ll save you a billing surprise.

Who Should Upgrade to Claude Opus 4.7?

Let me give you the clear-cut version, because I know you’re busy:

Upgrade immediately if you are:

- Running agentic or multi-step automation workflows

- Working with visual assets, reports, or screenshots regularly

- Using Claude Code or API integrations for content production

- Managing complex, long-running research or analysis tasks

Stick with Opus 4.6 (for now) if you are:

- Running simple, single-turn content generation at high volume

- Haven’t audited your existing prompts and can’t afford workflow disruption

- On a very tight per-token budget and can’t absorb potential tokenizer variance

Skip Opus altogether if you are:

- A solo creator doing basic blog drafts or social posts — honestly, Claude Sonnet 4.6 is faster, cheaper, and more than capable for that level of work.

Claude Opus 4.7 vs. the Competition

Here’s where I’ll give you the honest picture without the fanboy filter.

Opus 4.7 is ahead of GPT-5.4 and Gemini 3.1 Pro on most agentic and coding benchmarks. However, it does regress on Terminal-Bench 2.0, where GPT-5.4 scores 75.1% versus Opus 4.7’s 69.4%. Label Rer Furthermore, there’s the “Mythos” question — Anthropic has a more powerful model in restricted preview that outperforms Opus 4.7 everywhere, but it’s not publicly available. So for practical purposes, Opus 4.7 is the best generally accessible model on the market right now.

For most marketing and content workflows, the head-to-head differences between Opus 4.7 and GPT-5.4 will be marginal at best. Specifically, where Opus 4.7 clearly wins is in the 80/20 use case I see most often: long, complex, instruction-heavy tasks with multiple interdependent steps. That’s where the agentic improvements really show up in practice.

Final Verdict: Claude Opus 4.7 Review

After extensive hands-on testing, Claude Opus 4.7 is the real deal — specifically for teams using AI as infrastructure, not just a writing shortcut.

The vision upgrade alone is a legitimate reason to explore it. Furthermore, the agentic improvements mean your overnight automation workflows are significantly more likely to actually complete overnight. To be completely honest, I haven’t been this excited about a model release since the jump from Claude 3 to Claude 4.

That said, approach the migration thoughtfully. Audit your prompts, watch the tokenizer costs, and don’t expect your Opus 4.6 workflows to transfer without some tuning.

My verdict: For serious AI-powered marketing workflows, Opus 4.7 earns a strong recommendation. For casual use, you’re probably spending more than you need to.

FAQ: Claude Opus 4.7

Is Claude Opus 4.7 worth it for small businesses? It depends on your use case. If you’re running advanced AI automation or working with complex documents and visuals, yes — the performance gains justify the cost. For simple content tasks, Claude Sonnet 4.6 is a better value.

How much does Claude Opus 4.7 cost? Pricing is $5 per million input tokens and $25 per million output tokens — identical to Opus 4.6. Nxcode However, note that the new tokenizer may increase token usage by up to 35% depending on your content type.

What is the context window for Claude Opus 4.7? Claude Opus 4.7 supports a 1 million token context window with 128k max output tokens Claude API Docs, available at no long-context premium.

Is Claude Opus 4.7 better than GPT-5.4? On most agentic coding and vision benchmarks, yes. On Terminal-Bench specifically, GPT-5.4 still leads. For marketing workflows, the real-world difference is small — both are excellent models.

Where can I access Claude Opus 4.7? Claude Opus 4.7 is available across Claude.ai, the Anthropic API, Amazon Bedrock, Google Cloud Vertex AI, and Microsoft Foundry. Nxcode The API model ID is claude-opus-4-7.