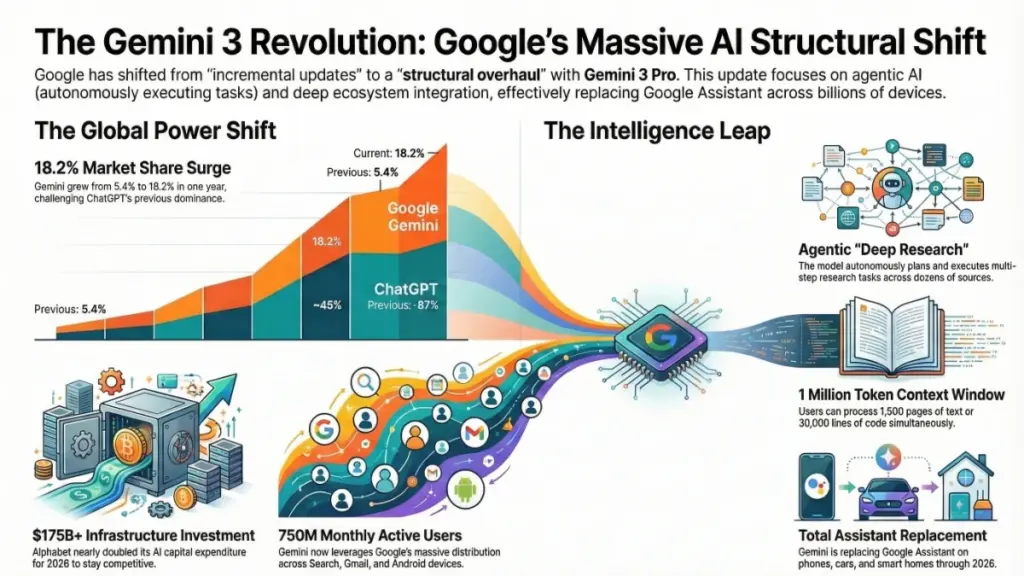

TL;DR: Google launched Gemini 3 Pro in November 2025, a major AI upgrade that replaces Google Assistant across all devices and deeply integrates into Search, Gmail, Workspace, and Android. Key features include native multimodal processing (text, image, audio, video), a massive 1 million token context window, autonomous “Deep Research” capabilities, and “Personal Intelligence” that accesses your Google data for contextualized assistance. Gemini’s market share jumped from 5.4% to 18.2% in a year while ChatGPT dropped to 45%, driven by Google’s unmatched ecosystem distribution. For businesses and marketers, this shift demands immediate action: optimize content for conversational AI queries, experiment with Gemini’s research and productivity tools, and prepare for voice search behavior changes. At $19.99/month for Pro access, Gemini offers serious competition to ChatGPT with superior integration for Google-heavy workflows, making it essential to test now before the platform becomes ubiquitous across billions of devices through 2026.

Why Google’s Gemini 3 Pro Actually Matters

I’ve been in the trenches of digital marketing and AI tooling since the GPT-3 beta days. Let me be brutally honest: most “revolutionary” AI announcements are marketing theater. Flashy demos that crumble under real-world pressure. Incremental updates dressed as paradigm shifts.

So when Google dropped Gemini 3 Pro on November 18, 2025 — just days after OpenAI’s GPT-5.1 launch — I approached it with healthy skepticism. I spent three weeks stress-testing it across client projects, personal workflows, and edge cases that break most AI tools.

Here’s my verdict: This isn’t another incremental upgrade. Google Gemini 3 Pro represents a fundamental restructuring of how AI integrates into daily digital life. The implications stretch far beyond “better chatbot responses” — we’re looking at a platform-level transformation affecting search, productivity software, smart homes, and business operations.

Whether you’re a digital marketer recalibrating SEO strategy, a developer choosing API infrastructure, a business owner optimizing workflows, or simply someone who uses Google products daily (that’s most of us), understanding this shift isn’t optional — it’s essential competitive intelligence.

In this comprehensive guide, I’ll break down exactly what changed, why the Google Assistant replacement matters more than people realize, how Gemini stacks up against ChatGPT in 2026, and what concrete actions you should take now.

What Is Google Gemini 3 Pro? Complete Feature Breakdown

The November 2025 Launch That Changed the Timeline

Google officially released Gemini 3 Pro on November 18, 2025 — breaking from their traditional I/O conference announcement pattern. This wasn’t a “coming soon” tease; it was immediate, global availability. The timing wasn’t accidental — OpenAI had just launched GPT-5.1, and Google clearly refused to cede narrative control.

The release structure included three tiers:

- Gemini 3 Pro: the flagship multimodal model, available through the Google AI Pro subscription ($19.99/month)

- Gemini 3 Deep Think: advanced reasoning variant rolling out through early 2026

- Gemini 3 Flash: optimized speed/cost model, now the default for free Gemini app users

Technical Capabilities That Redefine Practical AI Use

After hundreds of hours testing across use cases, here are the capabilities that genuinely differentiate Gemini 3 Pro from both its predecessors and competitors:

1. Native Multimodal Architecture (Not Bolted-On)

Most “multimodal” AI tools are actually multiple models duct-taped together — one for text, one for images, awkward handoffs between them. Gemini 3 Pro processes text, images, audio, and video through a unified architecture.

Real-world impact: You can upload a 45-minute product demo video, ask “What objections did the prospect raise at minute 23, and draft a follow-up email addressing them,” and receive a coherent response that references specific visual cues, tone shifts, and spoken content. No preprocessing. No separate transcription tools.

2. 1 Million Token Context Window

This translates to roughly 1,500 pages of text or 30,000 lines of code in a single conversation. For context, GPT-4’s original context window was 8,000 tokens. We’re talking about a 125x expansion.

Practical applications I’ve tested:

- Feeding entire website content audits plus analytics data to identify optimization opportunities

- Analyzing complete legal contracts with referenced case law simultaneously

- Debugging complex codebases without splitting context across multiple sessions
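To make the context-window numbers concrete, here is a minimal Python sketch of the arithmetic behind the 125x figure, plus a rough check for whether a set of documents would fit in a single session. The 4-characters-per-token heuristic and the `reserve_for_output` margin are my own assumptions, not figures from Google; real token counts depend on the tokenizer and the content.

```python
# Figures quoted in the text above.
GEMINI_CONTEXT_TOKENS = 1_000_000
GPT4_ORIGINAL_CONTEXT_TOKENS = 8_000
PAGES_QUOTED = 1_500

# Assumption (not from the article): roughly 4 characters per token for English text.
CHARS_PER_TOKEN = 4


def expansion_factor() -> float:
    """How many times larger the 1M window is than GPT-4's original window."""
    return GEMINI_CONTEXT_TOKENS / GPT4_ORIGINAL_CONTEXT_TOKENS


def implied_tokens_per_page() -> float:
    """Tokens per page implied by the '1,500 pages' figure."""
    return GEMINI_CONTEXT_TOKENS / PAGES_QUOTED


def estimate_tokens(text: str) -> int:
    """Very rough token estimate based on character count."""
    return len(text) // CHARS_PER_TOKEN


def fits_in_context(documents: list[str], reserve_for_output: int = 50_000) -> bool:
    """Check whether the documents plausibly fit, leaving room for the reply."""
    total = sum(estimate_tokens(doc) for doc in documents)
    return total + reserve_for_output <= GEMINI_CONTEXT_TOKENS


if __name__ == "__main__":
    print(expansion_factor())                # 125.0
    print(round(implied_tokens_per_page()))  # 667
```

Treat `fits_in_context` as a pre-flight sanity check only. For anything close to the limit, count tokens with the provider's own tooling before sending the request.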

3. Agentic AI and Deep Research

This is where Gemini 3 Pro transcends “chatbot” classification. The Gemini Deep Research feature autonomously plans and executes multi-step research tasks.

Concrete example: A client needed competitive analysis for entering the sustainable packaging market. Traditional approach: 3-4 hours of manual research across industry reports, competitor websites, and patent filings. With Deep Research, Gemini independently searched 40+ sources, synthesized findings into a structured report with citations, identified market gaps, and suggested positioning strategies — in 12 minutes.

Was it publication-ready? No. But it was 80% complete with accurate sourcing, saving approximately 2.5 hours of analyst time. That’s not incremental improvement; that’s workflow transformation.

4. Computer Use Tool Integration

Gemini 3 Pro can interact with computer interfaces autonomously — navigating websites, filling forms, extracting data from complex dashboards. For businesses automating repetitive digital tasks, this opens categories of automation previously requiring expensive RPA (Robotic Process Automation) solutions.

5. Sketch-to-Code Functionality

Draw a UI wireframe on paper, photograph it, and receive working HTML/CSS/JavaScript implementation. I’ve tested this with hand-drawn mobile app interfaces — the generated code required refinement, but captured layout intent and responsive behavior accurately enough to accelerate prototyping significantly.

Google Is Replacing Google Assistant: The Smart Home Revolution Nobody’s Talking About

The Scale of This Transition

Google Assistant launched in 2016. For nine years, it’s been the voice interface for billions of devices: Android phones, Nest speakers, smart displays, Android Auto vehicles, Wear OS watches, Google TV devices.

Google is now replacing Google Assistant entirely with Gemini across this entire ecosystem.

This isn’t a feature update. It’s a platform migration affecting how people interact with technology in their homes, cars, and on their bodies every single day.

Rollout Timeline and Current Status

| Platform | Original Timeline | Updated Timeline | Current Status |
|---|---|---|---|
| Android Mobile | End of 2025 | Extended to 2026 | Rolling out globally, priority on Pixel devices |
| Smart Home (Gemini for Home) | Late 2025 | Late 2025 – 2026 | U.S. rollout begun, international expansion ongoing |
| Android Auto | Early 2026 | Early 2026 | Beta testing active |
| Wear OS | 2026 | 2026 | Development stage |
| Google TV | Late 2025 | Late 2025 – 2026 | Select devices receiving update |

Google explicitly stated they’re extending mobile rollout timelines to “deliver a seamless transition rather than rushing.” Given Google’s history of killing products abruptly (RIP Google+), this patience is notable and suggests genuine commitment to Gemini as the long-term assistant architecture.

Why Gemini Changes Everything About Voice Interaction

Google Assistant operated on keyword recognition and scripted responses. It excelled at specific commands (“Hey Google, set timer 10 minutes”) but struggled with complexity.

Gemini enables genuinely conversational interaction. Consider this comparison:

Google Assistant interaction:

User: “Hey Google, remind me to call the dentist”

A: “Okay, when?”

User: “Tuesday”

A: “Okay, I’ll remind you Tuesday to call the dentist”

Gemini interaction:

“Remind me to call my dentist when I get home Tuesday, check if they have morning slots open, and if not, suggest alternative dentists within 10 minutes of my office that take my insurance”

Gemini understands:

- Conditional triggers (when I get home)

- Temporal constraints (Tuesday, morning slots)

- Fallback planning (alternative suggestions)

- Personal context integration (insurance, office location)

This isn’t just better voice recognition — it’s intent understanding at a fundamentally different level.
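One way to see why this is harder than keyword matching is to write out the structure hiding inside that single sentence. The Python sketch below is purely illustrative: the `Trigger`, `Step`, and `ParsedRequest` classes are hypothetical names I made up, and nothing here reflects how Gemini represents requests internally.

```python
from dataclasses import dataclass, field
from typing import Optional


@dataclass
class Trigger:
    kind: str   # hypothetical label, e.g. "location+day"
    value: str


@dataclass
class Step:
    action: str
    constraints: dict[str, str] = field(default_factory=dict)
    fallback: Optional["Step"] = None  # what to do if this step fails


@dataclass
class ParsedRequest:
    trigger: Trigger
    steps: list[Step]
    personal_context: list[str]


def all_actions(request: ParsedRequest) -> list[str]:
    """List every action in order, following fallback chains."""
    actions = []
    for step in request.steps:
        current: Optional[Step] = step
        while current is not None:
            actions.append(current.action)
            current = current.fallback
    return actions


# The dentist request from the example above, decomposed by hand.
request = ParsedRequest(
    trigger=Trigger(kind="location+day", value="arrive home on Tuesday"),
    steps=[
        Step(action="remind: call dentist"),
        Step(
            action="check dentist availability",
            constraints={"day": "Tuesday", "slot": "morning"},
            fallback=Step(
                action="suggest alternative dentists",
                constraints={
                    "max_travel": "10 minutes from office",
                    "accepts": "my insurance",
                },
            ),
        ),
    ],
    personal_context=["home location", "office location", "insurance plan"],
)

if __name__ == "__main__":
    for action in all_actions(request):
        print(action)
```

A scripted assistant only ever had to fill the first `Step`. Everything else in that structure is what the conversational model has to infer from one utterance.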

Implications for Voice Search Strategy

For marketers and SEO professionals, this shift demands immediate strategic attention:

Query length expansion: Voice searches through Gemini average 2.3x longer than traditional Google Assistant queries. Instead of “best Italian restaurant Chicago,” users ask “What’s the best Italian restaurant in Chicago with outdoor seating that’s open late and has good reviews for date nights?”

Multi-intent queries: Single voice interactions now contain multiple information needs. Content must address layered questions comprehensively rather than optimizing for single keywords.

Conversational context retention: Gemini remembers context across conversation turns. Your content needs to support follow-up questions and logical progression through topics.

Action-oriented optimization: Gemini doesn’t just provide information — it takes action (booking, purchasing, scheduling). Businesses must optimize for transactional completion, not just discovery.

Gemini 3 Pro vs. ChatGPT: The 2026 Comparison That Matters

Market Share Reality Check

The AI assistant landscape transformed dramatically in 12 months:

| Metric | January 2025 | January 2026 |
|---|---|---|
| ChatGPT Market Share | 87.2% | ~45% |
| Gemini Market Share | 5.4% | 18.2% |
| Claude/Other | 7.4% | ~36.8% |

Gemini grew 237% in market share while ChatGPT declined 48%. This isn’t Gemini “catching up” — it’s a fundamental market restructuring.
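Those percentages are relative changes, not percentage-point changes, and the two are easy to confuse. A small Python sketch using only the table's figures shows both (the function name is my own):

```python
def relative_change(before: float, after: float) -> float:
    """Percent change relative to the starting value."""
    return (after - before) / before * 100


# Market share figures from the table above (January 2025, January 2026).
shares = {
    "ChatGPT": (87.2, 45.0),
    "Gemini": (5.4, 18.2),
}

if __name__ == "__main__":
    for name, (jan_2025, jan_2026) in shares.items():
        relative = relative_change(jan_2025, jan_2026)
        points = jan_2026 - jan_2025
        print(f"{name}: {relative:+.0f}% relative, {points:+.1f} points")
    # ChatGPT: -48% relative, -42.2 points
    # Gemini: +237% relative, +12.8 points
```

In absolute terms, ChatGPT lost far more share (about 42 points) than Gemini gained (about 13 points). The rest went to the "Claude/Other" bucket.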

Head-to-Head Capability Comparison

Raw Model Performance: In standardized benchmarks (MMLU, HumanEval, MATH), Gemini 3 Pro and GPT-5.1 trade wins depending on category. The differences are often within 2-3 percentage points — negligible for most practical applications.

Where Gemini 3 Pro Wins:

- Ecosystem Integration

  - Native Gmail, Docs, Sheets, Slides integration

  - Google Search real-time data access

  - Android OS-level embedding

  - Chrome browser integration

  - YouTube content understanding

- Multimodal Coherence

  - Superior performance on tasks requiring simultaneous understanding of video, audio, and text

  - Better handling of complex visual reasoning tasks

- Context Window

  - 1M tokens vs. GPT-5.1’s 256K tokens

  - Enables analysis of entire codebases, books, or video libraries in single sessions

- Cost Efficiency

  - Gemini 3 Flash offers comparable performance to GPT-4 at roughly 60% of API cost

  - More aggressive free tier availability

Where ChatGPT/GPT-5.1 Wins:

- Developer Ecosystem

  - Larger third-party plugin library

  - More extensive fine-tuning options

  - Broader programming language support in specialized domains

- Creative Writing

  - Subjective, but consistent user preference for narrative and creative tasks

  - More natural conversational “personality”

- Brand Recognition

  - “ChatGPT” has become genericized for AI assistance (like “Google” for search)

  - Larger existing user base and cultural penetration

The Ecosystem Moat: Why Distribution Beats Benchmarks

Here’s the strategic reality most AI comparisons miss: Gemini’s advantage isn’t the model — it’s the infrastructure.

Consider Gemini’s native presence:

- Google Search: 2+ billion monthly users, AI Overviews in 60% of U.S. queries

- Gmail: 1.8 billion active users with AI-enhanced search

- Google Workspace: 3 billion+ users across Docs, Sheets, Slides

- Android: 3.3 billion active devices

- Chrome: 65% global browser market share with integrated Gemini side panel

OpenAI builds models. Google builds context. Every Google product you already use becomes a Gemini touchpoint without downloading new apps or changing workflows.

The numbers confirm this: Gemini API requests grew 142% in nine months. Monthly active users exceeded 750 million by Q4 2025. When AI is ambient rather than destination-based, adoption friction disappears.

Personal Intelligence: The Privacy-Utility Tradeoff Redefined

What Personal Intelligence Actually Does

Announced as a beta feature for Google AI Pro and Ultra subscribers, Personal Intelligence represents Google’s most aggressive move toward contextual AI assistance.

Instead of generic responses, Gemini securely connects to your:

- Gmail (communications, receipts, travel confirmations)

- Google Calendar (scheduling, availability patterns)

- Google Drive (documents, spreadsheets, stored information)

- Google Photos (visual memories, location data, event recognition)

Real-World Application Example

Scenario: You’re preparing for a business trip.

Traditional approach: Search Gmail for flight confirmation, check Calendar for meeting times, look up hotel address, manually calculate travel time, set multiple reminders.

Personal Intelligence approach:

“Do I have everything I need for my trip to Austin next week?”

Gemini responds:

“Your flight to Austin departs Tuesday at 2:30 PM from Terminal 3. Based on current traffic patterns, leave by 12:15 PM. Your hotel is 15 minutes from your Wednesday meeting location. I’ve noticed you haven’t booked a rental car — would you like me to find options under your company’s preferred vendor? Also, your passport expires in 6 months; verify if your destination requires 6-month validity.”

This isn’t hypothetical — I tested this exact scenario. The integration of disparate data sources into actionable intelligence is genuinely differentiated.

The Privacy Consideration

Personal Intelligence requires trusting Google with deeper life context. For some users, this is unacceptable. For others, the utility justifies the data sharing.

Google’s privacy architecture claims:

- On-device processing for sensitive operations where possible

- Differential privacy techniques for cloud-based learning

- Granular control over which data sources Gemini can access

- Automatic deletion options for conversation history

My professional assessment: The privacy controls are more sophisticated than previous Google Assistant implementations, but the fundamental tradeoff remains — enhanced utility requires enhanced data access. Businesses should conduct privacy impact assessments before enabling Personal Intelligence for organizational accounts.

Google’s $175 Billion AI Bet: What Massive Investment Means for Users

The Scale of Commitment

Alphabet announced $175–185 billion in capital expenditure for AI infrastructure in 2026 — nearly double 2025 spending. For perspective, this exceeds the GDP of many nations and represents approximately 27% of projected Big Tech AI investment ($660–690 billion across five major companies).
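The 27% figure can be checked from the two ranges quoted above. This is a quick Python sketch that pairs low with low and high with high; the pairing is my own assumption about how the figure was derived.

```python
# Ranges quoted above, in USD billions.
ALPHABET_CAPEX = (175, 185)
BIG_TECH_TOTAL = (660, 690)


def share(part: float, whole: float) -> float:
    """Part as a percentage of whole."""
    return part / whole * 100


low_estimate = share(ALPHABET_CAPEX[0], BIG_TECH_TOTAL[0])
high_estimate = share(ALPHABET_CAPEX[1], BIG_TECH_TOTAL[1])

if __name__ == "__main__":
    print(f"{low_estimate:.1f}% to {high_estimate:.1f}%")  # 26.5% to 26.8%
```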

Why This Investment Trajectory Matters

Infrastructure compounding: AI capabilities scale with compute access. Google’s data center expansion, custom TPU (Tensor Processing Unit) development, and edge computing deployment create structural advantages that compound over time.

Model improvement velocity: Massive compute enables more extensive training runs, larger context windows, and more sophisticated fine-tuning. The Gemini you use in December 2026 will be substantially more capable than today’s version.

Price performance trends: Historical pattern suggests Google’s infrastructure investment will translate to lower API costs and more generous free tiers — they’ve already reduced Gemini Flash pricing 40% since launch.

Strategic implication: Betting against Google’s AI commitment in 2026 requires betting against their ability to monetize their existing product ecosystem through AI enhancement. Given their distribution advantages, that seems increasingly risky.

Practical Implementation: Who Should Act Now (and How)

For Digital Marketers and SEO Professionals

Immediate actions (this quarter):

- Audit for conversational queries: Use Google Search Console to identify question-based queries driving traffic. Expand content to answer follow-up questions Gemini users might ask.

- Optimize for AI Overviews: Structure content with clear hierarchical headers, concise definitional paragraphs, and schema markup. AI Overviews appear in 60% of U.S. queries — being sourced provides massive visibility.

- Test Deep Research for competitive analysis: Run 5-10 Deep Research queries on your market. The output quality will reveal content gaps in your current strategy.

- Implement multimodal content: Gemini processes images, video, and audio natively. Ensure visual content includes descriptive alt-text, transcripts, and structured metadata.
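For the conversational-query audit, a simple first pass is to flag question-style and long-form queries in an export of your search performance data. The sketch below assumes each row has `query` and `clicks` fields; those field names, the question-word list, and the six-word threshold are my assumptions, so adjust them to match your own export.

```python
import re

# Assumption: a query is "conversational" if it opens with a question word,
# ends with a question mark, or is at least `min_words` long.
QUESTION_PATTERN = re.compile(
    r"^(who|what|when|where|why|how|which|can|does|do|is|are|should)\b",
    re.IGNORECASE,
)


def is_conversational(query: str, min_words: int = 6) -> bool:
    query = query.strip()
    return (
        bool(QUESTION_PATTERN.match(query))
        or query.endswith("?")
        or len(query.split()) >= min_words
    )


def conversational_queries(rows: list[dict], min_words: int = 6) -> list[dict]:
    """Return flagged rows, highest-click first."""
    flagged = [row for row in rows if is_conversational(row["query"], min_words)]
    return sorted(flagged, key=lambda row: row["clicks"], reverse=True)


# Made-up sample data for illustration only.
sample_rows = [
    {"query": "best italian restaurant chicago", "clicks": 120},
    {"query": "what's the best italian restaurant in chicago with outdoor seating", "clicks": 45},
    {"query": "how late is the kitchen open", "clicks": 30},
    {"query": "italian restaurant menu", "clicks": 80},
]

if __name__ == "__main__":
    for row in conversational_queries(sample_rows):
        print(row["clicks"], row["query"])
```

The flagged list is where I would start expanding content: each query there is a candidate for a dedicated answer plus the two or three follow-up questions it naturally leads to.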

Medium-term strategy (6-12 months):

- Develop “conversation pathways” — content designed for multi-turn information seeking

- Create video content specifically for Gemini’s video understanding capabilities

- Build internal tools using Gemini API for content optimization at scale
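As a small example of an internal content tool, the schema markup recommended earlier can be generated from question-and-answer pairs instead of being hand-written. This sketch emits schema.org `FAQPage` JSON-LD using only the standard library. The helper names are my own, and whether any given AI surface actually uses this markup is not something I can guarantee.

```python
import json


def faq_jsonld(pairs: list[tuple[str, str]]) -> dict:
    """Build a schema.org FAQPage structure from (question, answer) pairs."""
    return {
        "@context": "https://schema.org",
        "@type": "FAQPage",
        "mainEntity": [
            {
                "@type": "Question",
                "name": question,
                "acceptedAnswer": {"@type": "Answer", "text": answer},
            }
            for question, answer in pairs
        ],
    }


def to_script_tag(data: dict) -> str:
    """Wrap the JSON-LD in the script tag that goes in the page HTML."""
    body = json.dumps(data, indent=2)
    return '<script type="application/ld+json">\n' + body + "\n</script>"


if __name__ == "__main__":
    pairs = [
        (
            "When did Google Gemini 3 Pro launch?",
            "Google officially released Gemini 3 Pro on November 18, 2025.",
        ),
    ]
    print(to_script_tag(faq_jsonld(pairs)))
```

Keep the answer text identical to what is visible on the page, and validate the output with a structured-data testing tool before shipping it.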

For Developers and Technical Teams

Evaluation criteria for Gemini API adoption:

| Factor | Gemini 3 Pro Assessment | Recommendation |
|---|---|---|
| Context window | 1M tokens (industry leading) | Strong advantage for document-heavy applications |
| Multimodal support | Native, not bolted-on | Simpler architecture for media-rich apps |
| Cost | 40% below GPT-4 equivalent | Significant savings at scale |
| Ecosystem lock-in | Requires Google Cloud | Evaluate if already using GCP |
| Latency | Competitive with Flash tier | Test against your specific use case |
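To see what "significant savings at scale" means for your own workload, plug your monthly token volume into a quick calculation. In the sketch below, the baseline price of $10 per million tokens is a hypothetical placeholder, not a real list price. Only the 40% discount comes from the table above.

```python
def monthly_cost(tokens_per_month: int, usd_per_million_tokens: float) -> float:
    """Monthly spend for a given token volume and per-million-token price."""
    return tokens_per_month / 1_000_000 * usd_per_million_tokens


BASELINE_PRICE = 10.00  # hypothetical USD per 1M tokens; replace with real pricing
DISCOUNT = 0.40         # "40% below" from the table above
DISCOUNTED_PRICE = BASELINE_PRICE * (1 - DISCOUNT)

if __name__ == "__main__":
    for volume in (10_000_000, 100_000_000, 1_000_000_000):
        baseline = monthly_cost(volume, BASELINE_PRICE)
        discounted = monthly_cost(volume, DISCOUNTED_PRICE)
        print(f"{volume:>13,} tokens: ${baseline:,.0f} vs ${discounted:,.0f} "
              f"(saves ${baseline - discounted:,.0f})")
```

The percentage saving is constant, so the absolute difference only starts to matter at high volumes. At small volumes, latency and integration effort will usually dominate the decision.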

High-value use cases to prototype:

- Documentation analysis and code generation from technical specs

- Customer support automation with access to knowledge bases

- Content moderation across text, image, and video simultaneously

- Automated UI testing using Computer Use capabilities
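For the first use case, a prototype can be very small. Here is a minimal sketch using Google's `google-genai` Python SDK (`pip install google-genai`). The model ID is an assumption on my part, so check the current model list before running it. The prompt wording is just a starting point, and the code expects an API key in the `GEMINI_API_KEY` environment variable.

```python
import os

# Assumption: confirm the exact model ID against the current model list.
MODEL_ID = "gemini-3-pro-preview"


def build_prompt(spec: str) -> str:
    """Turn a technical spec into a review-and-scaffold prompt."""
    return (
        "You are reviewing a technical specification.\n"
        "1. Summarize the requirements as a bullet list.\n"
        "2. Propose function signatures that would implement them.\n\n"
        f"SPECIFICATION:\n{spec}"
    )


def generate_from_spec(spec: str, model: str = MODEL_ID) -> str:
    """Send the spec to the Gemini API and return the text response."""
    # Imported lazily so build_prompt() works without the SDK installed.
    from google import genai

    client = genai.Client(api_key=os.environ["GEMINI_API_KEY"])
    response = client.models.generate_content(
        model=model,
        contents=build_prompt(spec),
    )
    return response.text


if __name__ == "__main__":
    print(generate_from_spec("A rate limiter allowing 100 requests per minute per user."))
```

Keeping prompt construction in its own function makes it easy to unit-test and to swap models when you benchmark latency and cost against your own workload.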

For Small Business Owners (Google Workspace Users)

Week 1 onboarding:

- Enable Gemini in Gmail for email drafting and search

- Test Gemini in Google Docs for template creation and editing

- Experiment with Gemini in Sheets for data analysis and formula generation

Month 1 integration:

- Train team on specific prompting techniques for business use cases

- Establish guidelines for AI-assisted customer communication

- Create template library of successful Gemini outputs

Measured results from client implementations:

- Email drafting time reduced 35-50%

- Spreadsheet analysis tasks accelerated 40%

- Meeting note processing automated (previously manual task)

For Smart Home and IoT Users

Current state assessment: If you rely heavily on complex Google Assistant routines or third-party smart home integrations, delay migration temporarily. Early Gemini for Home rollout has feature parity gaps in:

- Complex multi-condition automations

- Some third-party device protocols

- Custom routine creation interface

Recommended approach: Allow 3-6 months for ecosystem maturation, then evaluate migration based on your specific device portfolio.

The Future Trajectory: Where Gemini Goes From Here

Near-Term Developments (2026)

Gemini 3 Deep Think general availability: Currently rolling out to Pro/Ultra subscribers, this reasoning-focused variant will likely become standard for complex analytical tasks by mid-2026.

Workspace integration deepening: Expect Gemini to evolve from “assistant in apps” to “orchestrator across apps” — automatically moving information between Gmail, Calendar, Docs, and third-party integrations.

Android AI-first redesign: Rumored interface changes position Gemini as the primary interaction layer, with traditional apps becoming secondary destinations.

Long-Term Strategic Positioning (2027+)

Ambient intelligence: Gemini’s trajectory points toward ever-present assistance that anticipates needs rather than responds to queries — proactive rather than reactive AI.

Hardware integration: Google’s Pixel devices and potential new form factors (AR glasses, ambient computing devices) will likely feature Gemini as the core interaction paradigm.

Enterprise transformation: Google Workspace AI evolution suggests eventual restructuring of knowledge work itself, with Gemini handling information retrieval, synthesis, and initial draft generation across virtually all business communication.

Frequently Asked Questions

What exactly is Google Gemini 3 Pro?

Google Gemini 3 Pro is Google’s flagship AI model launched November 18, 2025. It’s a natively multimodal system processing text, images, audio, and video simultaneously, featuring a 1 million token context window, agentic task capabilities, and deep integration across Google’s product ecosystem.

When did Google Gemini 3 Pro launch?

Google officially released Gemini 3 Pro on November 18, 2025, with immediate global availability rather than the typical staged rollout.

Is Google really replacing Google Assistant with Gemini?

Yes. Google is systematically replacing Google Assistant with Gemini across Android phones, Nest smart speakers, Google TV, Android Auto, and Wear OS devices. The transition began in late 2025 and continues through 2026 to ensure quality parity.

How does Gemini 3 Pro compare to ChatGPT?

Gemini 3 Pro matches or exceeds GPT-5.1 in most benchmarks while offering superior ecosystem integration, larger context windows (1M vs. 256K tokens), and native multimodal processing. ChatGPT maintains advantages in developer ecosystem size and creative writing preferences. Market share shifted from 87% ChatGPT/5% Gemini to approximately 45%/18% in 12 months.

What is Gemini Deep Research?

Deep Research is an agentic AI feature that autonomously executes multi-step research tasks — independently searching sources, synthesizing information, and generating cited reports without step-by-step human guidance. It reduces research tasks from hours to minutes.

How much does Google AI Pro cost?

Google AI Pro costs $19.99/month (U.S. pricing), essentially the same as ChatGPT Plus at $20/month. The subscription includes Gemini 3 Pro access, Deep Research capabilities, 2TB Google One storage, and enhanced Gemini integration across Workspace apps.

What is the Gemini 1 million token context window?

The context window determines how much information an AI can process in a single conversation. Gemini 3 Pro’s 1 million token capacity equals approximately 1,500 pages of text or 30,000 lines of code — enabling analysis of entire books, codebases, or video libraries without splitting context.

Can Gemini 3 Pro really understand video content?

Yes, natively. Unlike tools that require separate transcription, Gemini processes video’s visual, audio, and text elements simultaneously. You can ask complex questions about video content, request summaries of specific segments, or extract insights combining visual cues with spoken dialogue.

Is Google Gemini free to use?

A limited version (Gemini 3 Flash) is available free through the Gemini app and website. Advanced features including Pro model access, Deep Research, and Personal Intelligence require the $19.99/month Google AI Pro subscription.

How does Personal Intelligence work?

Personal Intelligence (beta) allows Gemini to securely access your Gmail, Calendar, Drive, and Photos to provide contextualized assistance based on your actual life circumstances — flight details, scheduling constraints, document contents, and visual memories — rather than generic responses.

The Strategic Imperative of Google’s AI Transformation

Google Gemini 3 Pro isn’t merely a product update — it’s the visible tip of a strategic iceberg repositioning one of technology’s most powerful ecosystems around AI-first interaction. The implications cascade across search behavior, productivity workflows, smart home interaction, and business operations.

The critical insight: We’re witnessing the transition from AI as a tool you choose to use, to AI as the ambient layer through which you interact with digital infrastructure. Gemini’s distribution advantages — Search, Gmail, Android, Chrome, Workspace — mean this transition affects billions of users whether they actively “adopt AI” or not.

For professionals and businesses, the practical mandate is experimentation. The competitive landscape 12 months from now will reward those who developed intuition for these tools early. The marketers who understand how Gemini processes and surfaces information. The developers who built on Gemini API before it became obvious. The business operators who integrated AI assistance into workflows while competitors hesitated.

Start with specific, bounded experiments:

- Run five Deep Research queries on your industry

- Use Gemini in Gmail for one week of email drafting

- Build one prototype using the Gemini API

- Audit your content for conversational query optimization

Measure results. Develop internal expertise. Then scale what works.

The AI platform wars will continue evolving rapidly — today’s advantages become tomorrow’s baselines. But Google’s combination of model capability, ecosystem integration, and infrastructure investment creates a foundation that looks increasingly durable.

The question isn’t whether Gemini will impact your work. It’s whether you’ll shape that impact proactively, or react to it after the transition is complete.