TL;DR: After testing 40+ machine learning platforms in real marketing workflows, I’ve found that the best ML software depends on your team’s skills and existing infrastructure, not on feature lists. Google Vertex AI excels for GCP-native teams but has a steep learning curve. DataRobot delivers fast results for enterprise budgets but costs six figures annually. H2O.ai offers powerful open-source AutoML for technically capable teams on tight budgets. Amazon SageMaker suits AWS-centric organizations, though pricing surprises are common. Weights & Biases is essential for teams doing custom model development who need experiment tracking. Avoid no-code platforms for complex business decisions, always test with your real data before buying, and budget 60-80% of project time for data preparation regardless of which tool you choose.

Introduction: Why 90% of Machine Learning Software Reviews Fail You

Let’s cut through the noise: most machine learning software reviews are worthless. They’re written by content farms regurgitating feature lists or affiliate marketers chasing commissions. After spending four years embedding ML tools into real marketing operations—not running toy demos—I can tell you the gap between marketing promises and reality is staggering.

Last quarter, I rescued a mid-size e-commerce brand that burned $18,000 on an ML platform their previous agency “thoroughly evaluated.” That evaluation? A 20-minute vendor demo and skimming G2 reviews. Result: demoralized teams, embarrassingly inaccurate predictions, and customer support that vanished when problems arose.

This guide is what I wish existed when I started—brutally honest assessments, real-world context, and clear guidance on who each tool actually serves. Whether you’re a solo analyst exploring ML or a marketing ops director justifying six-figure investments, this review cuts through the hype.

The golden rule: The best ML tool isn’t the one with the most features—it’s the one your team still uses 90 days after launch.

What Actually Matters When Choosing Machine Learning Software

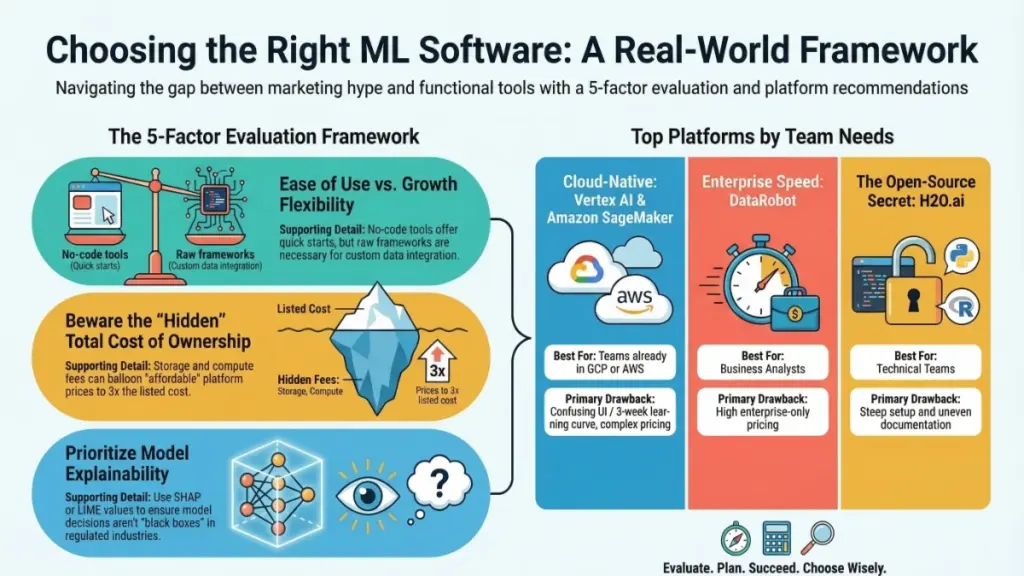

Before comparing platforms, understand the five decision factors most buyers ignore:

1. The Usability vs. Flexibility Paradox

Here’s the tension vendors won’t mention: the tools that are easiest to start with often hit walls fastest. Platforms like DataRobot and Google AutoML deliver working models in hours, but choke on custom feature engineering or proprietary data sources. Raw frameworks like TensorFlow offer unlimited flexibility, along with unlimited debugging nightmares.

My recommendation: Match complexity to capability. Dedicated data scientists need flexibility; marketing ops teams with basic Python skills need low-code solutions they can grow with.

2. Integration Reality Check

I cannot stress this enough: verify integrations first. I once recommended a platform that couldn’t natively connect to the client’s Salesforce instance. Three months of workaround development later, the lesson was learned.

Action item: Map where your data lives. Confirm native connectors exist before evaluating features.

3. True Total Cost of Ownership

Sticker prices lie. Factor in compute costs (especially cloud training), per-seat fees, API call charges, and data storage costs. Some platforms charge per GB monthly—devastating with large training datasets. I’ve seen “affordable” tools hit 3x their listed price within months.

4. Model Explainability Requirements

Regulated industries and marketing stakeholders demand transparency. SHAP values, LIME explanations, or feature importance dashboards aren’t optional—they’re essential. Black-box models you can’t interrogate become liabilities when executives ask “why did it predict that?”
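One model-agnostic way to answer "why did it predict that?" at a global level is permutation importance: shuffle one input column and measure how much the error grows. The sketch below is a minimal pure-Python illustration of that idea. The toy `predict` function, the feature names, and the data are hypothetical stand-ins for whatever model your platform exposes, and this is not how SHAP or LIME compute their explanations.

```python
import random

def mean_abs_error(predict, rows, targets):
    """Average absolute gap between predictions and known outcomes."""
    return sum(abs(predict(r) - t) for r, t in zip(rows, targets)) / len(rows)

def permutation_importance(predict, rows, targets, feature, repeats=20, seed=0):
    """Average increase in error after shuffling one feature's column.

    A larger value means the model leans on that feature more heavily.
    """
    rng = random.Random(seed)
    baseline = mean_abs_error(predict, rows, targets)
    increases = []
    for _ in range(repeats):
        column = [r[feature] for r in rows]
        rng.shuffle(column)
        # Rebuild each row with the shuffled value for this one feature.
        shuffled = [{**r, feature: v} for r, v in zip(rows, column)]
        increases.append(mean_abs_error(predict, shuffled, targets) - baseline)
    return sum(increases) / repeats

# Hypothetical stand-in for a trained model: the prediction uses visits only.
def predict(row):
    return 3.0 * row["visits"]

rows = [{"visits": v, "account_age": a}
        for v, a in [(1, 7), (2, 3), (5, 9), (8, 1), (13, 4), (21, 6)]]
targets = [predict(r) for r in rows]

importances = {
    name: permutation_importance(predict, rows, targets, name)
    for name in ("visits", "account_age")
}
```

Here `account_age` scores zero because the toy model never reads it, while `visits` scores well above zero. A platform's feature importance dashboard should let you run the same sanity check against features you know are irrelevant.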

5. Support Quality and Documentation Currency

I used to consider documentation a nice-to-have. Now it’s a dealbreaker. When production fails at 11 PM before a client presentation, you need current docs or responsive support. Prioritize platforms with active communities, live chat, and documentation that has been updated within the last six months.

Top Machine Learning Software Platforms: Brutally Honest Reviews

Google Vertex AI: Best for GCP-Native Organizations

If you’re already on Google Cloud infrastructure, Vertex AI deserves serious consideration. Its AutoML excels at image classification, natural language processing, and tabular data predictions. The MLOps pipeline orchestration has improved dramatically; what felt clunky eighteen months ago now rivals dedicated platforms.

Strengths:

- Seamless BigQuery integration

- Robust AutoML capabilities

- Comprehensive monitoring dashboards

- Competitive per-compute pricing

Critical Weakness: The user interface embodies Google’s design philosophy: powerful but perplexing. I’ve onboarded five teams, and every one of them needed about three weeks to get comfortable. Documentation exists in abundance but assumes GCP familiarity. Without existing Google Cloud experience, ramp-up time is substantial.

Ideal for: Mid-to-large data engineering teams already committed to GCP seeking unified MLOps platforms.

Pricing: Pay-as-you-go compute with variable training costs. Budget carefully based on model complexity and dataset sizes.

DataRobot: Best for Business Users Needing Rapid Results

DataRobot earned its reputation honestly. For business analysts or marketing ops managers needing predictive models without PhD-level expertise, this is the most reliable shortcut available. Upload data, define your prediction target, and watch it test dozens of algorithms in parallel and rank them on a performance leaderboard. It genuinely delivers.

Strengths:

- Industry-leading AutoML

- Exceptional explainability features (DataRobot’s standout advantage)

- Solid deployment tooling

- Strong enterprise governance capabilities

Critical Weakness: Pricing is painful. We’re talking enterprise contracts typically starting in six figures annually. The lack of public pricing transparency frustrates evaluation. For smaller teams without dedicated data science budgets, cost-benefit calculations rarely justify the investment.

Ideal for: Enterprise teams prioritizing speed-to-value with budget flexibility. Excellent for regulated industries requiring built-in model explainability.

H2O.ai: Best Open-Source Alternative for Budget-Conscious Teams

H2O represents machine learning’s best-kept secret. The open-source H2O-3 platform delivers AutoML power comparable to DataRobot at zero licensing cost. The trade-off: self-managed infrastructure and a steeper learning curve. H2O Driverless AI, the company’s commercial product, closes much of that gap with a polished interface but brings licensing costs back into the picture.

Strengths:

- Genuinely excellent AutoML algorithms (particularly tabular data)

- Strong Python and R API support

- Active open-source community

- Cost-effective for technically capable teams

Critical Weakness: Setting up the open-source version is more complex than getting started on competing platforms. Documentation quality varies significantly: some sections are comprehensive, others assume existing expertise.

Ideal for: Python/R-proficient data science teams seeking powerful AutoML without enterprise pricing. Excellent for lean startups.

Amazon SageMaker: Best for AWS-Centric Operations

SageMaker serves as Amazon’s answer to Vertex AI, and it’s genuinely mature. Improvements to the Studio interface over the past two years have transformed its usability. Feature Store excels for teams sharing pipelines across models. Clarify, the bias detection and explainability module, implements responsible AI workflows better than most competitors.

Strengths:

- Deep AWS ecosystem integration

- Excellent MLOps tooling

- Strong feature engineering capabilities

- Reliable real-time inference performance

- Solid enterprise support infrastructure

Critical Weakness: Pricing complexity creates genuine surprises. I’ve watched AWS bills shock clients repeatedly. Compute, storage, inference endpoints, and data transfer fees compound aggressively without vigilant monitoring. Implement billing alerts immediately upon deployment.

Ideal for: AWS-committed teams wanting end-to-end MLOps without infrastructure management overhead.

Weights & Biases (W&B): Best for Experiment Management

W&B occupies a different category: less model building, more experimentation governance. When data scientists write custom training code but struggle with reproducibility, hyperparameter sweeps, or distributed team collaboration, W&B becomes essential.

Strengths:

- Outstanding experiment tracking interface

- Excellent hyperparameter sweep capabilities

- Strong team collaboration features

- Generous free tier for small teams

- Exceptional documentation quality

Critical Weakness: This isn’t a model construction tool. If you need AutoML or end-to-end pipelines, W&B won’t satisfy those requirements. It complements training frameworks rather than replacing them.

Ideal for: Custom model development teams seeking systematic experimentation processes. Outstanding value for research-intensive operations.

The No-Code ML Platform Reality Check

The no-code segment deserves special attention because hype massively outpaces reality. Platforms like Obviously.AI, Akkio, and Lobe promise ML democratization that’s partially true—and partially misleading.

The honest assessment: For simple predictions on clean tabular data—churn forecasting, lead scoring, basic demand prediction—no-code platforms deliver genuinely functional models. I’ve deployed lead scoring systems for small marketing teams using Akkio in under two hours. For appropriate use cases, they work.

The limitations emerge with messy real-world data: missing values, inconsistent formats, complex relationships. Feature engineering options are limited, the available model types are narrow, and explainability features often lack depth. You get results, but they are not always trustworthy enough for high-stakes decisions.
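Before handing a file to any platform, it helps to measure how messy it actually is. The sketch below is a minimal standard-library audit that counts missing values per column and flags columns whose dates use more than one format. The column names, the sample rows, the set of "missing" tokens, and the two date patterns are all illustrative assumptions.

```python
import re
from collections import Counter

# Tokens treated as missing; adjust to match your own exports (assumption).
MISSING_TOKENS = {"", "na", "n/a", "null", "none", "nan"}

# Two illustrative date shapes; real data may need more patterns.
DATE_PATTERNS = {
    "iso (YYYY-MM-DD)": re.compile(r"^\d{4}-\d{2}-\d{2}$"),
    "slash (MM/DD/YYYY)": re.compile(r"^\d{1,2}/\d{1,2}/\d{4}$"),
}

def is_missing(value):
    return value is None or str(value).strip().lower() in MISSING_TOKENS

def audit(rows):
    """Return per-column missing rates and the date formats seen in each column."""
    columns = sorted({key for row in rows for key in row})
    report = {}
    for col in columns:
        values = [row.get(col) for row in rows]
        present = [str(v).strip() for v in values if not is_missing(v)]
        formats = Counter(
            name
            for v in present
            for name, pattern in DATE_PATTERNS.items()
            if pattern.match(v)
        )
        report[col] = {
            "missing_rate": 1 - len(present) / len(rows),
            "date_formats": dict(formats),
            "mixed_dates": len(formats) > 1,
        }
    return report

# Hypothetical CRM export with typical problems.
rows = [
    {"email": "a@example.com", "signup": "2024-01-15", "plan": "pro"},
    {"email": "", "signup": "01/22/2024", "plan": "N/A"},
    {"email": "c@example.com", "signup": "2024-02-03", "plan": "basic"},
    {"email": "d@example.com", "signup": None, "plan": "pro"},
]

report = audit(rows)
```

On this sample, every column has a 25% missing rate and `signup` is flagged for mixed date formats. If a no-code tool accepts a file like this without complaint, check how it handled those rows before trusting the model.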

My analogy: A no-code ML platform is like a food processor: excellent at what it was designed for, but no substitute for a chef’s knife.

Strategic recommendation: Deploy no-code platforms to validate ML’s potential for your specific problems. When results prove promising, graduate to sophisticated platforms. Never base irreversible business decisions solely on no-code outputs.

Critical Mistakes That Destroy ML Implementations

After witnessing dozens of failed deployments, these patterns recur consistently. Avoid them:

Starting with tools instead of problems. Teams spend months evaluating platforms before clearly defining prediction targets or optimization goals. Nail your problem statement first.

Underestimating data preparation. Data cleaning and preparation consume 60-80% of project timelines on most ML initiatives. Budget accordingly.

Ignoring model drift. Models are trained on historical data, and their accuracy degrades as current data drifts away from it. Build monitoring and retraining into initial workflows, not as afterthoughts.

Choosing impressive demos over practical tools. The flashiest demos often belong to the platforms with the steepest production learning curves. I learned this through expensive experience.

Skipping free tier validation. Major platforms offer free tiers or trials. Use them for a minimum of two weeks on genuine (not toy) problems before committing financially.

Excluding end users from evaluation. Analysts and marketers using model outputs daily must participate in tool selection. How well a tool fits their workflow matters as much as model performance.
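For the drift point above, one common monitoring check is the Population Stability Index (PSI), which compares how a feature or score was distributed at training time with how it is distributed now. This is a minimal sketch under stated assumptions: the bin edges, the sample scores, and the alert threshold are illustrative, and the frequently quoted 0.1 and 0.25 cutoffs are rules of thumb rather than guarantees.

```python
import math

def bin_shares(values, edges, floor=1e-6):
    """Share of values in each bin [edges[i], edges[i+1]).

    The last bin also includes its upper edge. Values outside the
    edges are not counted in any bin.
    """
    counts = [0] * (len(edges) - 1)
    for v in values:
        for i in range(len(counts)):
            last = i == len(counts) - 1
            if edges[i] <= v < edges[i + 1] or (last and v == edges[-1]):
                counts[i] += 1
                break
    # A small floor avoids log(0) when a bin is empty.
    return [max(c / len(values), floor) for c in counts]

def psi(expected, actual, edges):
    """Population Stability Index between a baseline and a current sample."""
    e = bin_shares(expected, edges)
    a = bin_shares(actual, edges)
    return sum((ai - ei) * math.log(ai / ei) for ei, ai in zip(e, a))

# Hypothetical model scores at training time and today.
edges = [0.0, 0.25, 0.5, 0.75, 1.0]
training_scores = [0.1, 0.2, 0.3, 0.4, 0.6, 0.7, 0.8, 0.9]
current_scores = [0.6, 0.7, 0.7, 0.8, 0.8, 0.9, 0.9, 1.0]

drift = psi(training_scores, current_scores, edges)
needs_review = drift > 0.25  # illustrative threshold, not a standard
```

Identical distributions give a PSI of zero. The shifted sample here lands far above the threshold, which is the kind of signal that should trigger a look at retraining.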

The 4-Week ML Software Evaluation Framework

This methodology, refined through years of client work, prevents expensive mistakes:

Week 1: Definition and Shortlisting

Document your use case in one sentence. Identify 3-5 platforms explicitly supporting that application. Verify data source connectivity. Eliminate options whose pricing is too opaque to check against your budget.

Week 2: Hands-On Validation

Use your actual data, not sample datasets. Execute identical prediction tasks across platforms. Time how long each platform takes to get from raw data to a deployable model. Document every friction point. Test support channels with genuine technical questions.

Week 3: Stress Testing

Try to break each platform. Upload messy data. Push volume limits. Test the integrations production will require. Involve actual end users and watch how they get on without help.

Week 4: TCO Analysis and Decision

Construct 12-month cost models including compute, storage, seats, and professional services. Compare against expected value generation. Decide—and document reasoning for future reference.
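The Week 4 cost model can be sketched as a small calculation. Every figure below is a made-up placeholder, not a quote from any vendor, and treating professional services as a one-off fee is an assumption. Swap in numbers from your own trial usage and contract.

```python
from dataclasses import dataclass

@dataclass
class MonthlyCosts:
    compute: float        # training and inference compute per month
    storage_gb: float     # data stored on the platform
    storage_rate: float   # price per GB per month
    seats: int
    seat_price: float     # price per seat per month

    def total(self) -> float:
        return (self.compute
                + self.storage_gb * self.storage_rate
                + self.seats * self.seat_price)

def twelve_month_tco(monthly: MonthlyCosts, professional_services: float,
                     monthly_growth: float = 0.0) -> float:
    """Sum 12 months of cost, letting compute and storage grow each month."""
    total = professional_services  # treated here as a one-off fee
    compute, storage_gb = monthly.compute, monthly.storage_gb
    for _ in range(12):
        month = MonthlyCosts(compute, storage_gb, monthly.storage_rate,
                             monthly.seats, monthly.seat_price)
        total += month.total()
        compute *= 1 + monthly_growth
        storage_gb *= 1 + monthly_growth
    return total

# Placeholder inputs (hypothetical).
costs = MonthlyCosts(compute=1200.0, storage_gb=500.0, storage_rate=0.10,
                     seats=4, seat_price=50.0)
flat_tco = twelve_month_tco(costs, professional_services=10_000.0)
growing_tco = twelve_month_tco(costs, professional_services=10_000.0,
                               monthly_growth=0.10)

expected_value = 40_000.0  # hypothetical 12-month benefit
net_value = expected_value - flat_tco
```

Running the model once with flat usage and once with monthly growth shows how quickly a comfortable margin can shrink as compute and storage scale.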

Key Takeaways: Finding Your Perfect ML Software Match

After four years observing ML implementations succeed and fail, one truth dominates: optimal machine learning software isn’t determined by benchmark scores or feature matrices, but by organizational fit—alignment with team skills, data infrastructure, use cases, and budgets.

Four non-negotiable principles:

- Match complexity to expertise: No-code for business users, AutoML for analytics teams, open-source frameworks for data scientists.

- Prioritize integrations and TCO over unused features.

- Validate with real data and actual problems before spending.

- Plan for drift and maintenance from day one; this is where most implementations silently fail.

Your immediate action: Select one deferred use case—churn prediction, lead scoring, demand forecasting—and initiate free trials on two platforms from this review. Two weeks of hands-on testing teaches more than hours of review reading.

Frequently Asked Questions

What’s the best machine learning software for beginners?

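Non-technical users with enterprise budgets should look at DataRobot, or at Vertex AI’s AutoML if the team already knows GCP. For simple predictions on clean data, no-code tools such as Akkio are the gentlest starting point. For zero-cost entry points that do require some Python, H2O.ai’s open-source platform and Google Colab with scikit-learn cover substantial ground. Select tools matching current capabilities, not aspirational skill levels.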

What does machine learning software cost?

Ranges vary enormously. Open-source frameworks (TensorFlow, PyTorch, scikit-learn) are free beyond compute costs. Cloud AutoML platforms (Vertex AI, SageMaker) operate pay-as-you-go, typically costing hundreds to thousands of dollars monthly depending on usage. Enterprise solutions like DataRobot require six-figure annual commitments. Weights & Biases offers generous free tiers and reasonable team pricing around $50/seat/month.

Can machine learning work without coding skills?

Yes, with important caveats. No-code platforms (Akkio, Obviously.AI) handle simple predictions on clean data without programming. However, complex problems, messy data, or custom logic requirements expose limitations rapidly. Even user-friendly platforms require basic data literacy, meaning an understanding of what the data represents and what the model is meant to predict, to prevent costly errors.

How quickly does ML software deliver value?

No-code platforms with clean data produce working models within hours. Realistically, getting from tool selection to a production-ready model that delivers business value takes four to twelve weeks, depending on data quality, team expertise, and use case complexity. Data preparation time remains the dominant variable.

Is open-source ML software competitive with paid platforms?

For model performance, often yes—or superior. Scikit-learn and XGBoost power many paid platforms internally. Differences emerge in infrastructure, interfaces, deployment tooling, and support. Paid platforms save time and reduce operational complexity. Open-source saves money but demands greater engineering investment. Neither dominates universally; optimal choice depends entirely on team composition and priorities.